ElasticSearch7.7.1安装分词器——ik分词器和hanlp分词器

背 景

之所以选择用ES,自然是看重了她的倒排所以,倒排索引又必然关联到分词的逻辑,此处就以中文分词为例以下说到的分词指的就是中文分词,ES本身默认的分词是将每个汉字逐个分开,具体如下,自然是很弱的,无法满足业务需求,那么就需要把那些优秀的分词器融入到ES中来,业界比较好的中文分词器排名如下,hanlp> ansj >结巴>ik>smart chinese analysis;

博主这里就选两种比较常用的讲解hanlp和ik ,hanlp在业界名声最响,ik是官方推荐和ES版本同步更新的使用最多的分词器,并且举例比较下他们的功能;

断句对比效果

默认的分词器效果;

GET /_analyze

{

"text": "林俊杰在上海市开演唱会啦"

}

# 结果

{

"tokens" : [

{

"token" : "林",

"start_offset" : 0,

"end_offset" : 1,

"type" : "" ,

"position" : 0

},

{

"token" : "俊",

"start_offset" : 1,

"end_offset" : 2,

"type" : "" ,

"position" : 1

},

{

"token" : "杰",

"start_offset" : 2,

"end_offset" : 3,

"type" : "" ,

"position" : 2

},

{

"token" : "在",

"start_offset" : 3,

"end_offset" : 4,

"type" : "" ,

"position" : 3

},

{

"token" : "上",

"start_offset" : 4,

"end_offset" : 5,

"type" : "" ,

"position" : 4

},

{

"token" : "海",

"start_offset" : 5,

"end_offset" : 6,

"type" : "" ,

"position" : 5

},

{

"token" : "市",

"start_offset" : 6,

"end_offset" : 7,

"type" : "" ,

"position" : 6

},

{

"token" : "开",

"start_offset" : 7,

"end_offset" : 8,

"type" : "" ,

"position" : 7

},

{

"token" : "演",

"start_offset" : 8,

"end_offset" : 9,

"type" : "" ,

"position" : 8

},

{

"token" : "唱",

"start_offset" : 9,

"end_offset" : 10,

"type" : "" ,

"position" : 9

},

{

"token" : "会",

"start_offset" : 10,

"end_offset" : 11,

"type" : "" ,

"position" : 10

},

{

"token" : "啦",

"start_offset" : 11,

"end_offset" : 12,

"type" : "" ,

"position" : 11

}

]

}

ik分词器效果,这里以ik_smart为例;

GET /_analyze

{

"text": "林俊杰在上海市开演唱会啦",

"analyzer": "ik_smart"

}

# 结果

{

"tokens" : [

{

"token" : "林俊杰",

"start_offset" : 0,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "在上",

"start_offset" : 3,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 1

},

{

"token" : "海市",

"start_offset" : 5,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "开",

"start_offset" : 7,

"end_offset" : 8,

"type" : "CN_CHAR",

"position" : 3

},

{

"token" : "演唱会",

"start_offset" : 8,

"end_offset" : 11,

"type" : "CN_WORD",

"position" : 4

},

{

"token" : "啦",

"start_offset" : 11,

"end_offset" : 12,

"type" : "CN_CHAR",

"position" : 5

}

]

}

hanlp分词器效果,这里以hanlp默认分词器为例;

GET /_analyze

{

"text": "林俊杰在上海市开演唱会啦",

"analyzer": "hanlp"

}

# 结果如下

{

"tokens" : [

{

"token" : "林俊杰",

"start_offset" : 0,

"end_offset" : 3,

"type" : "nr",

"position" : 0

},

{

"token" : "在",

"start_offset" : 3,

"end_offset" : 4,

"type" : "p",

"position" : 1

},

{

"token" : "上海市",

"start_offset" : 4,

"end_offset" : 7,

"type" : "ns",

"position" : 2

},

{

"token" : "开",

"start_offset" : 7,

"end_offset" : 8,

"type" : "v",

"position" : 3

},

{

"token" : "演唱会",

"start_offset" : 8,

"end_offset" : 11,

"type" : "n",

"position" : 4

},

{

"token" : "啦",

"start_offset" : 11,

"end_offset" : 12,

"type" : "y",

"position" : 5

}

]

}

断句层面,hanlp还是要强于ik的;

ik安装

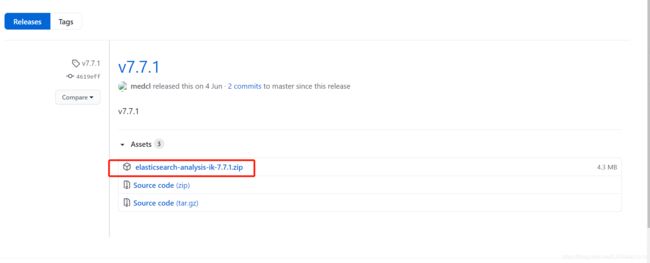

- 官网找到和ES版本的

elasticsearch-analysis-ik-7.7.1.zip,下载安装zip包,如图1;

- 将下载的

elasticsearch-analysis-ik-7.7.1.zip上传到elasticsearch 的安装目录下的plugins下,如我的是/usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins,当然,你集群要是网速不错,也可以在家此文件夹下直接下载,省去上传的工作;

cd /usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins

#直接下载指令

wget https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v7.7.1/elasticsearch-analysis-ik-7.7.1.zip

- 解压

/usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins``下的elasticsearch-analysis-ik-7.7.1.zip`包,指令如下;

#因为是zip,如果报错unzip不是内部指令。说明没安装unzip需要先安装,如果已安装,直接跳过这里

yum install zip

yum install unzip

#新建id文件夹

mkdir ik

#将zip包移入刚刚新建ik文件夹呢

mv ./elasticsearch-analysis-ik-7.7.1.zip ik/

#进入ik文件夹

cd ik

#解压

unzip elasticsearch-analysis-ik-7.7.1.zip

#解压后确保里面的问价如下

total 5828

-rwxr-xr-x 1 hadoop supergroup 263965 Aug 5 18:57 commons-codec-1.9.jar

-rwxr-xr-x 1 hadoop supergroup 61829 Aug 5 18:57 commons-logging-1.2.jar

drwxrwxrwx 2 hadoop supergroup 299 Aug 5 18:57 config

-rwxr-xr-x 1 hadoop supergroup 54599 Aug 5 18:57 elasticsearch-analysis-ik-7.7.1.jar

-rwxr-xr-x 1 hadoop supergroup 4504441 Aug 5 18:57 elasticsearch-analysis-ik-7.7.1.zip

-rwxr-xr-x 1 hadoop supergroup 736658 Aug 5 18:57 httpclient-4.5.2.jar

-rwxr-xr-x 1 hadoop supergroup 326724 Aug 5 18:57 httpcore-4.4.4.jar

-rwxr-xr-x 1 hadoop supergroup 1805 Aug 5 18:57 plugin-descriptor.properties

-rwxr-xr-x 1 hadoop supergroup 125 Aug 5 18:57 plugin-security.policy

#赋权

chmod -R 777 ./*

#切换到es的安装目录

cd /usr/local/tools/elasticsearch/elasticsearch-7.7.1/

#查看是否安装完成

bin/elasticsearch-plugin list

#返回结果

future versions of Elasticsearch will require Java 11; your Java version from [/usr/local/tools/java/jdk1.8.0_211/jre] does not meet this requirement

ik

- 重启es,让分词器生效,操作shell如下;

# 利用jps查看elasticsearch的守护进程

jps

#结果

2497 Kafka

2609 QuorumPeerMain

23906 Elasticsearch

32282 NodeManager

2428 Jps

7341 Worker

2126 CoarseGrainedExecutorBackend

#杀死elasticsearch的守护进程

kill -9 23906

#重启启动es

bin/elasticsearch -d

- 确保整个es集群上的每台机器都操作了以上步骤后,就可以在kibana上测试了,kibana RESTFul风格的测试语句如下;

GET /_analyze

{

"text": "林俊杰在上海市开演唱会啦",

"analyzer": "ik_smart"

}

# 结果

{

"tokens" : [

{

"token" : "林俊杰",

"start_offset" : 0,

"end_offset" : 3,

"type" : "CN_WORD",

"position" : 0

},

{

"token" : "在上",

"start_offset" : 3,

"end_offset" : 5,

"type" : "CN_WORD",

"position" : 1

},

{

"token" : "海市",

"start_offset" : 5,

"end_offset" : 7,

"type" : "CN_WORD",

"position" : 2

},

{

"token" : "开",

"start_offset" : 7,

"end_offset" : 8,

"type" : "CN_CHAR",

"position" : 3

},

{

"token" : "演唱会",

"start_offset" : 8,

"end_offset" : 11,

"type" : "CN_WORD",

"position" : 4

},

{

"token" : "啦",

"start_offset" : 11,

"end_offset" : 12,

"type" : "CN_CHAR",

"position" : 5

}

]

}

更多的ik分词器结合es的使用,请查考ik的官网readme教程:传送门

hanlp安装

hanlp在es的使用有很多人在做,版本相对比较乱,博主也是找了好几个版本,终于选了一个博主用的来的,hanlp的安装稍微会比ik繁琐一丢丢,所以大家也稍微耐心点;

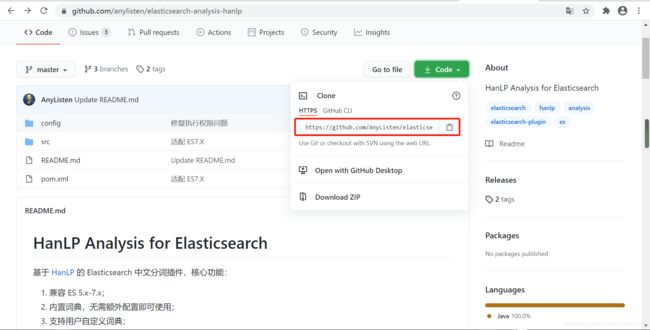

hanlp并没有做到和ES版本的同步更新,所以遇到较新的版本,则需要自己编译源码打包!比如我们的ElasticSearch7.7.1就是目前(20201225)没有release版本!而且hanlp分词器不能直接找hanlp包,用不了,而是要找和elasticsearch兼容的elasticsearch-analysis-hanlp

-

利用git,在文件夹内

git clone https://github.com/AnyListen/elasticsearch-analysis-hanlp.git,再利用java的开发工具IDEA或者eclipse打开项目,打开 pom.xml 文件,修改7.0.0 为需要的 ES 版本; -

这个git项目的老哥太大意了,留了个bug,如下图3d的文件内缺少两个参数

name,你把它补全加上,不然编译报错,然后使用 mvn package 生产打包文件,最终文件在 target/release 文件夹下,打包完成后,使用离线方式安装即可。

.

- 在es的插件目录下

/usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins新建`hanlp1文件夹,开始离线安装,代码如下;

#进入es插件目录

cd /usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins

#新建hanlp文件夹并进入

mkdir hanlp

chmod 755 hanlp

cd hanlp

#将之前重新编译打包好的 target/release下的elasticsearch-analysis-hanlp-7.7.1.zip上传到新建的hanlp目录下解压

unzip elasticsearch-analysis-hanlp-7.7.1.zip

#解压后目录如下

-rwxr-xr-x 1 hadoop supergroup 33498 Dec 24 15:24 elasticsearch-analysis-hanlp-7.7.1.jar

-rw-r--r-- 1 hadoop supergroup 7747506 Dec 24 15:24 elasticsearch-analysis-hanlp-7.7.1.zip

-rwxr-xr-x 1 hadoop supergroup 7971652 Dec 24 15:24 hanlp-portable-1.7.3.jar

-rwxr-xr-x 1 hadoop supergroup 2493 Dec 24 15:24 hanlp.properties

-rwxr-xr-x 1 hadoop supergroup 1117 Dec 24 15:24 plugin-descriptor.properties

-rwxr-xr-x 1 hadoop supergroup 88 Dec 24 15:24 plugin.properties

-rwxr-xr-x 1 hadoop supergroup 414 Dec 24 15:24 plugin-security.policy

#赋权

chmod -R 755 ./*

#利用vi修改hanlp.properties里面的root=的值,为es的hanlp插件安装目录,如下

root=/usr/local/tools/elasticsearch/elasticsearch-7.7.1/plugins/hanlp/

#wq!保存hanlp.properties的内容

#汇到es的安装目录查看hanlp分词器是否成功

cd /usr/local/tools/elasticsearch/elasticsearch-7.7.1/

bin/elasticsearch-plugin list

#返回结果

future versions of Elasticsearch will require Java 11; your Java version from [/usr/local/tools/java/jdk1.8.0_211/jre] does not meet this requirement

hanlp

ik

- 重启es,让分词器生效,操作shell如下;

# 利用jps查看elasticsearch的守护进程

jps

#结果

2497 Kafka

2609 QuorumPeerMain

24812 Elasticsearch

32282 NodeManager

2428 Jps

7341 Worker

2126 CoarseGrainedExecutorBackend

#杀死elasticsearch的守护进程

kill -9 24812

#重启启动es

bin/elasticsearch -d

- 确保整个es集群上的每台机器都操作了以上步骤后,就可以在kibana上测试了,kibana RESTFul风格的测试语句如下;

GET /_analyze

{

"text": "林俊杰在上海市开演唱会啦",

"analyzer": "hanlp"

}

# 结果如下

{

"tokens" : [

{

"token" : "林俊杰",

"start_offset" : 0,

"end_offset" : 3,

"type" : "nr",

"position" : 0

},

{

"token" : "在",

"start_offset" : 3,

"end_offset" : 4,

"type" : "p",

"position" : 1

},

{

"token" : "上海市",

"start_offset" : 4,

"end_offset" : 7,

"type" : "ns",

"position" : 2

},

{

"token" : "开",

"start_offset" : 7,

"end_offset" : 8,

"type" : "v",

"position" : 3

},

{

"token" : "演唱会",

"start_offset" : 8,

"end_offset" : 11,

"type" : "n",

"position" : 4

},

{

"token" : "啦",

"start_offset" : 11,

"end_offset" : 12,

"type" : "y",

"position" : 5

}

]

}

**更多的hanlp分词器结合es的使用,请查考hanlp某一派系的的官网readme教程:[传送门](https://github.com/anylisten/elasticsearch-analysis-hanlp)**

## ==专有名词对比效果==

默认的分词器效果;

```json

GET /_analyze

{

"text": "中国移动"

}

#结果

{

"tokens" : [

{

"token" : "中",

"start_offset" : 0,

"end_offset" : 1,

"type" : "",

"position" : 0

},

{

"token" : "国",

"start_offset" : 1,

"end_offset" : 2,

"type" : "",

"position" : 1

},

{

"token" : "移",

"start_offset" : 2,

"end_offset" : 3,

"type" : "",

"position" : 2

},

{

"token" : "动",

"start_offset" : 3,

"end_offset" : 4,

"type" : "",

"position" : 3

}

]

}

ik分词器效果,这里以ik_smart为例;

GET /_analyze

{

"text": "中国移动",

"analyzer": "ik_smart"

}

#结果

{

"tokens" : [

{

"token" : "中国移动",

"start_offset" : 0,

"end_offset" : 4,

"type" : "CN_WORD",

"position" : 0

}

]

}

hanlp分词器效果,这里以hanlp默认分词器为例;

GET /_analyze

{

"text": "中国移动",

"analyzer": "hanlp"

}

#结果如下

{

"tokens" : [

{

"token" : "中国",

"start_offset" : 0,

"end_offset" : 2,

"type" : "ns",

"position" : 0

},

{

"token" : "移动",

"start_offset" : 2,

"end_offset" : 4,

"type" : "vn",

"position" : 1

}

]

}

专有名词上,hanlp和ik的各有特殊,读者也可自己多测试几轮,而且ik和hanlp自带网页版的在线分词器,只需要百度搜索ik活hanlp在线分词即可使用;

维护自己的词典

当然不论采用哪种分词器,都不能一劳永逸解决所有的分词匹配需求,特别是针对某些特有的分词需求,如当搜索自家公司或者自家公司产品时,期望他得分靠前,这个时候就需要维护自己的词典,ik和hanlp都支持维护自己的词典,即当你规定某个词为一体时,该词不会再做细分;具体操作可以查看各自官网的readme文件有说明。