- 日更006 终极训练营day3

懒cici

人生创业课(2)今天的主题:学习方法一:遇到有用的书,反复读,然后结合自身实际,列践行清单,不要再写读书笔记思考这本书与我有什么关系,我在哪些地方能用到,之后我该怎么用方法二:读完书没映像怎么办?训练你的大脑,方法:每读完一遍书,立马合上书,做一场分享,几分钟都行对自己的学习要求太低,要逼自己方法三:学习深度不够怎么办?找到细分领域的榜样,把他们的文章、书籍、产品都体验一遍,成为他们的超级用户,向

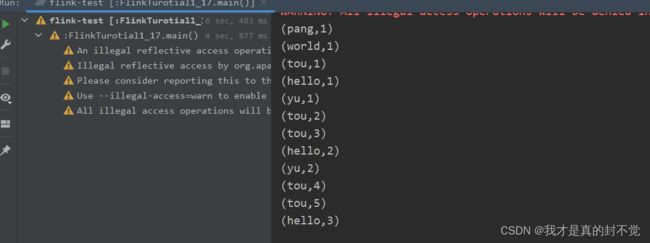

- 实时数据流计算引擎Flink和Spark剖析

程小舰

flinkspark数据库kafkahadoop

在过去几年,业界的主流流计算引擎大多采用SparkStreaming,随着近两年Flink的快速发展,Flink的使用也越来越广泛。与此同时,Spark针对SparkStreaming的不足,也继而推出了新的流计算组件。本文旨在深入分析不同的流计算引擎的内在机制和功能特点,为流处理场景的选型提供参考。(DLab数据实验室w.x.公众号出品)一.SparkStreamingSparkStreamin

- 【花了N长时间读《过犹不及》,不断练习,可以越通透】

君君Love

我已经记不清花了多长时间去读《过犹不及》,读书笔记都写了42页,这算是读得特别精细的了。是一本难得的好书,虽然书中很多内容和圣经吻合,我不是基督徒,却觉得这样的文字值得细细品味,和我们的生活息息相关。我是个界线建立不牢固的人,常常愧疚,常常害怕他人的愤怒,常常不懂拒绝,还有很多时候表达不了自己真实的感受,心里在说不嘴里却在说好……这本书给我很多的启示,让我学会了怎样去建立属于自己的清晰的界限。建立

- 基于redis的Zset实现作者的轻量级排名

周童學

Javaredis数据库缓存

基于redis的Zset实现轻量级作者排名系统在今天的技术架构中,Redis是一种广泛使用的内存数据存储系统,尤其在需要高效检索和排序的场景中表现优异。在本篇博客中,我们将深入探讨如何使用Redis的有序集合(ZSet)构建一个高效的笔记排行榜系统,并提供相关代码示例和详细的解析。1.功能背景与需求假设我们有一个笔记分享平台,用户可以发布各种笔记,系统需要根据用户发布的笔记数量来生成一个实时更新的

- 常规笔记本和加固笔记本的区别

luchengtech

电脑三防笔记本加固计算机加固笔记本

在现代科技产品中,笔记本电脑因其便携性和功能性被广泛应用。根据使用场景和需求的不同,笔记本可分为常规笔记本和加固笔记本,二者在多个方面存在显著区别。适用场景是区分二者的重要标志。常规笔记本主要面向普通消费者和办公人群,适用于家庭娱乐、日常办公、学生学习等相对稳定的室内环境。比如,人们在家用它追剧、处理文档,学生在教室用它完成作业。而加固笔记本则专为特殊行业设计,像军事、野外勘探、工业制造、交通运输

- 第八课: 写作出版你最关心的出书流程和市场分析(无戒学堂复盘)

人在陌上

今天是周六,恰是圣诞节。推掉了两个需要凑腿的牌局,在一个手机,一个笔记本,一台电脑,一杯热茶的陪伴下,一个人静静地回听无戒学堂的最后一堂课。感谢这一个月,让自己的习惯开始改变,至少,可以静坐一个下午而不觉得乏味枯燥难受了,要为自己点个赞。我深知,这最后一堂课的内容,以我的资质和毅力,可能永远都用不上。但很明显,无戒学堂是用了心的,毕竟,有很多优秀学员,已经具备了写作能力,马上就要用到这堂课的内容。

- python笔记14介绍几个魔法方法

抢公主的大魔王

pythonpython

python笔记14介绍几个魔法方法先声明一下各位大佬,这是我的笔记。如有错误,恳请指正。另外,感谢您的观看,谢谢啦!(1).__doc__输出对应的函数,类的说明文档print(print.__doc__)print(value,...,sep='',end='\n',file=sys.stdout,flush=False)Printsthevaluestoastream,ortosys.std

- Deepseek技术深化:驱动大数据时代颠覆性变革的未来引擎

荣华富贵8

springboot搜索引擎后端缓存redis

在大数据时代,信息爆炸和数据驱动的决策逐渐重塑各行各业。作为一项前沿技术,Deepseek正在引领新一轮技术革新,颠覆传统数据处理与分析方式。本文将从理论原理、应用场景和前沿代码实践三个层面,深入剖析Deepseek技术如何为大数据时代提供颠覆性变革的解决方案。一、技术背景与核心思想1.1大数据挑战与机遇在数据量呈指数级增长的背景下,传统数据处理方法面临数据存储、计算效率和信息提取精度的诸多挑战。

- 《感官品牌》读书笔记 1

西红柿阿达

原文:最近我在东京街头闲逛时,与一位女士擦肩而过,我发现她的香水味似曾相识。“哗”的一下,记亿和情感立刻像潮水般涌了出来。这个香水味把我带回了15年前上高中的时候,我的一位亲密好友也是用这款香水。一瞬间,我呆站在那里,东京的街景逐渐淡出,取而代之的是我年少时的丹麦以及喜悦、悲伤、恐惧、困惑的记忆。我被这熟悉的香水味征服了。感想:感官是有记忆的,你所听到,看到,闻到过的有代表性的事件都会在大脑中深深

- 我不想再当知识的搬运工

楚煜楚尧

因为学校课题研究的需要,这个暑假我依然需要完成一本书的阅读笔记。我选的是管建刚老师的《习课堂十讲》。这本书,之前我读过,所以重读的时候,感到很亲切,摘抄起来更是非常得心应手。20页,40面,抄了十天,终于在今天大功告成了。这对之前什么事都要一拖再拖的我来说,是破天荒的改变。我发现至从认识小尘老师以后,我的确发生了很大的改变。遇到必须做却总是犹豫不去做的事,我学会了按照小尘老师说的那样,在心里默默数

- 大数据之路:阿里巴巴大数据实践——大数据领域建模综述

为什么需要数据建模核心痛点数据冗余:不同业务重复存储相同数据(如用户基础信息),导致存储成本激增。计算资源浪费:未经聚合的明细数据直接参与计算(如全表扫描),消耗大量CPU/内存资源。数据一致性缺失:同一指标在不同业务线的口径差异(如“活跃用户”定义不同),引发决策冲突。开发效率低下:每次分析需重新编写复杂逻辑,无法复用已有模型。数据建模核心价值性能提升:分层设计(ODS→DWD→DWS→ADS)

- 20210517坚持分享53天读书摘抄笔记 非暴力沟通——爱自己

f79a6556cb19

让生命之花绽放在赫布·加德纳(HerbGardner)编写的《一千个小丑》一剧中,主人公拒绝将他12岁的外甥交给儿童福利院。他郑重地说道:“我希望他准确无误地知道他是多么特殊的生命,要不,他在成长的过程中将会忽视这一点。我希望他保持清醒,并看到各种奇妙的可能。我希望他知道,一旦有机会,排除万难给世界一点触动是值得的。我还希望他知道为什么他是一个人,而不是一张椅子。”然而,一旦负面的自我评价使我们看

- Unity学习笔记1

zy_777

通过一个星期的简单学习,初步了解了下unity,unity的使用,以及场景的布局,UI,以及用C#做一些简单的逻辑。好记性不如烂笔头,一些关键帧还是记起来比较好,哈哈,不然可能转瞬即逝了,(PS:纯小白观点,unity大神可以直接忽略了)一:MonoBehaviour类的初始化1,Instantiate()创建GameObject2,通过Awake()和Start()来做初始化3,Update、L

- 大数据技术笔记—spring入门

卿卿老祖

篇一spring介绍spring.io官网快速开始Aop面向切面编程,可以任何位置,并且可以细致到方法上连接框架与框架Spring就是IOCAOP思想有效的组织中间层对象一般都是切入service层spring组成前后端分离已学方式,前后台未分离:Spring的远程通信:明日更新创建第一个spring项目来源:科多大数据

- Django学习笔记(一)

学习视频为:pythondjangoweb框架开发入门全套视频教程一、安装pipinstalldjango==****检查是否安装成功django.get_version()二、django新建项目操作1、新建一个项目django-adminstartprojectproject_name2、新建APPcdproject_namedjango-adminstartappApp注:一个project

- python学习笔记(汇总)

朕的剑还未配妥

python学习笔记整理python学习开发语言

文章目录一.基础知识二.python中的数据类型三.运算符四.程序的控制结构五.列表六.字典七.元组八.集合九.字符串十.函数十一.解决bug一.基础知识print函数字符串要加引号,数字可不加引号,如print(123.4)print('小谢')print("洛天依")还可输入表达式,如print(1+3)如果使用三引号,print打印的内容可不在同一行print("line1line2line

- Redis 分布式锁深度解析:过期时间与自动续期机制

爱恨交织围巾

分布式事务redis分布式数据库微服务学习go

Redis分布式锁深度解析:过期时间与自动续期机制在分布式系统中,Redis分布式锁的可靠性很大程度上依赖于对锁生命周期的管理。上一篇文章我们探讨了分布式锁的基本原理,今天我们将聚焦于一个关键话题:如何通过合理设置过期时间和实现自动续期机制,来解决分布式锁中的死锁与锁提前释放问题。一、为什么过期时间是分布式锁的生命线?你的笔记中提到"服务挂掉时未删除锁可能导致死锁",这正是过期时间要解决的核心问题

- 08.学习闭环三部曲:预习、实时学习、复习

0058b195f4dc

人生就是一本效率手册,你怎样对待时间,时间就会给你同比例的回馈。单点突破法。预习,实时学习,复习。1、预习:凡事提前【计划】(1)前一晚设置三个当日目标。每周起始于每周日。(2)提前学习。预习法进行思考。预不预习效果相差20%,预习法学会提问。(3)《学会提问》。听电子书。2.实时学习(1)(10%)相应场景,思维导图,快速笔记。灵感笔记。(2)大纲,基本记录,总结篇。3.复习法则,(70%),最

- 《如何写作》文心读书笔记

逆熵反弹力

《文心》这本书的文体是以讲故事的形式来讲解如何写作的,读起来不会觉得刻板。读完全书惊叹大师的文笔如此之好,同时感叹与此书相见恨晚。工作了几年发现表达能力在生活中越来越重要,不管是口语还是文字上的表达。有时候甚至都不能把自己想说的东西表达清楚,平时也有找过一些书来看,想通过提升自己的阅读量来提高表达能力。但是看了这么久的书发现见效甚微,这使得我不得不去反思,该怎么提高表达能力。因此打算从写作入手。刚

- SQL笔记纯干货

AI入门修炼

oracle数据库sql

软件:DataGrip2023.2.3,phpstudy_pro,MySQL8.0.12目录1.DDL语句(数据定义语句)1.1数据库操作语言1.2数据表操作语言2.DML语句(数据操作语言)2.1增删改2.2题2.3备份表3.DQL语句(数据查询语言)3.1查询操作3.2题一3.3题二4.多表详解4.1一对多4.2多对多5.多表查询6.窗口函数7.拓展:upsert8.sql注入攻击演示9.拆表

- 《4D卓越团队》习书笔记 第十六章 创造力与投入

Smiledmx

《4D卓越团队-美国宇航局的管理法则》(查理·佩勒林)习书笔记第十六章创造力与投入本章要点:务实的乐观不是盲目乐观,而是带来希望的乐观。用真相激起希望吉姆·科林斯在《从优秀到卓越》中写道:“面对残酷的现实,平庸的公司选择解释和逃避,而不是正视。”创造你想要的项目1.你必须从基于真相的事实出发。正视真相很难,逃避是人类的本性。2.面对现实,你想创造什么?-我想利用现有资源创造一支精干、高效、积极的橙

- 大数据精准获客并实现高转化的核心思路和实现方法

2401_88470328

大数据精准获客数据分析数据挖掘大数据需求分析bigdata

大数据精准获客并实现高转化的核心思路和实现方法大数据精准获客并实现高转化的核心思路和实现方法在当今信息爆炸的时代,企业如何通过海量的数据精准获取潜在客户,并提高转化率,已经成为营销策略中的关键环节。大数据精准获客的核心思路在于数据驱动、多渠道触达以及优化转化路径,从而实现高效的市场推广和客户转化。数据驱动原理和机制数据驱动的核心在于通过分析用户行为数据,挖掘潜在客户的需求和喜好,从而制定更加精准的

- 一地鸡毛—一个中年男人的日常2021241

随止心语所自欲律

2021年8月31日,星期二,阴有小雨。早起5:30,跑步10公里。空气清新,烟雨朦胧,远山如黛,烟雾缭绕,宛若仙境。空气中湿气很大,朦胧细雨拍打在脸上,甚是舒服,跑步的人明显减少。早上开会,领导说起逐年大幅度下滑的工作业绩,越说越激动,说得脸红脖子粗。开完会又讨论了一下会议精神,心情也有波动,学习热情不高。心里还有一个大事,是今日大数据分析第1次考试,因自己前期没学,而且计算机编程方面没有任何基

- 2020-12-10

生活有鱼_727f

今日汇总:1.学习了一只舞蹈2.专业知识抄了一遍3.讲师训作业完成今日不足之处:1.时间没管理好,浪费了很多时间到现在才做完明日必做:1.讲师训作业完成2.群消息做好笔记3.宽带安装

- 【Druid】学习笔记

fixAllenSun

学习笔记oracle

【Druid】学习笔记【一】简介【1】简介【2】数据库连接池(1)能解决的问题(2)使用数据库连接池的好处【3】监控(1)监控信息采集的StatFilter(2)监控不影响性能(3)SQL参数化合并监控(4)执行次数、返回行数、更新行数和并发监控(5)慢查监控(6)Exception监控(7)区间分布(8)内置监控DEMO【4】Druid基本配置参数介绍【5】Druid相比于其他数据库连接池的优点

- 微信公众号写作:如何通过文字变现?

氧惠爱高省

微信公众号已成为许多人分享知识、表达观点的重要平台。随着自媒体的发展,越来越多的人开始关注微信公众号上写文章如何挣钱的问题。本文将详细探讨微信公众号写作的盈利模式,帮助广大写作者实现文字变现的梦想。公众号流量主就找善士导师(shanshi2024)公众号:「善士笔记」主理人,《我的亲身经历,四个月公众号流量主从0到日入过万!》公司旗下管理800+公众号矩阵账号。代表案例如:爸妈领域、职场道道、国学

- 流利说懂你英语笔记要点句型·核心课·Level 8·Unit 3·Part 2·Video 1·Healing Architecture 1

羲之大鹅video

HealingArchitecture1EveryweekendforaslongasIcanremember,myfatherwouldgetuponaSaturday,putonawornsweatshirtandhe'dscrapeawayatthesqueakyoldwheelofahousethatwelivedin.ps:从我记事起,每个周末,我父亲都会在周六起床,穿上一件破旧的运动衫

- java学习笔记8

幸福,你等等我

学习笔记java

一、异常处理Error:错误,程序员无法处理,如OOM内存溢出错误、内存泄漏...会导出程序崩溃1.异常:程序中一些程序自身处理不了的特殊情况2.异常类Exception3.异常的分类:(1).检查型异常(编译异常):在编译时就会抛出的异常(代码上会报错),需要在代码中编写处理方式(和程序之外的资源访问)直接继承Exception(2).运行时异常:在代码运行阶段可能会出现的异常,可以不用明文处理

- 2025.07 Java入门笔记01

殷浩焕

笔记

一、熟悉IDEA和Java语法(一)LiuCourseJavaOOP1.一直在用C++开发,python也用了些,Java是真的不熟,用什么IDE还是问的同事;2.一开始安装了jdk-23,拿VSCode当编辑器,在cmd窗口编译运行,也能玩;但是想正儿八经搞项目开发,还是需要IDE;3.安装了IDEA社区版:(1)IDE通常自带对应编程语言的安装包,例如IDEA自带jbr-21(和jdk是不同的

- Java注解笔记

m0_65470938

java开发语言

一、什么是注解Java注解又称Java标注,是在JDK5时引入的新特性,注解(也被称为元数据)Javaa注解它提供了一种安全的类似注释的机制,用来将任何的信息或元数据(metadata)与程元素类、方法、成员变量等)进行关联二、注解的应用1.生成文档这是最常见的,也是iava最早提供的注解2.在编译时进行格式检查,如@Overide放在方法前,如果你这个方法并不是看盖了超类Q方法,则编译时就能检查

- 多线程编程之理财

周凡杨

java多线程生产者消费者理财

现实生活中,我们一边工作,一边消费,正常情况下会把多余的钱存起来,比如存到余额宝,还可以多挣点钱,现在就有这个情况:我每月可以发工资20000万元 (暂定每月的1号),每月消费5000(租房+生活费)元(暂定每月的1号),其中租金是大头占90%,交房租的方式可以选择(一月一交,两月一交、三月一交),理财:1万元存余额宝一天可以赚1元钱,

- [Zookeeper学习笔记之三]Zookeeper会话超时机制

bit1129

zookeeper

首先,会话超时是由Zookeeper服务端通知客户端会话已经超时,客户端不能自行决定会话已经超时,不过客户端可以通过调用Zookeeper.close()主动的发起会话结束请求,如下的代码输出内容

Created /zoo-739160015

CONNECTEDCONNECTED

.............CONNECTEDCONNECTED

CONNECTEDCLOSEDCLOSED

- SecureCRT快捷键

daizj

secureCRT快捷键

ctrl + a : 移动光标到行首ctrl + e :移动光标到行尾crtl + b: 光标前移1个字符crtl + f: 光标后移1个字符crtl + h : 删除光标之前的一个字符ctrl + d :删除光标之后的一个字符crtl + k :删除光标到行尾所有字符crtl + u : 删除光标至行首所有字符crtl + w: 删除光标至行首

- Java 子类与父类这间的转换

周凡杨

java 父类与子类的转换

最近同事调的一个服务报错,查看后是日期之间转换出的问题。代码里是把 java.sql.Date 类型的对象 强制转换为 java.sql.Timestamp 类型的对象。报java.lang.ClassCastException。

代码:

- 可视化swing界面编辑

朱辉辉33

eclipseswing

今天发现了一个WindowBuilder插件,功能好强大,啊哈哈,从此告别手动编辑swing界面代码,直接像VB那样编辑界面,代码会自动生成。

首先在Eclipse中点击help,选择Install New Software,然后在Work with中输入WindowBui

- web报表工具FineReport常用函数的用法总结(文本函数)

老A不折腾

finereportweb报表工具报表软件java报表

文本函数

CHAR

CHAR(number):根据指定数字返回对应的字符。CHAR函数可将计算机其他类型的数字代码转换为字符。

Number:用于指定字符的数字,介于1Number:用于指定字符的数字,介于165535之间(包括1和65535)。

示例:

CHAR(88)等于“X”。

CHAR(45)等于“-”。

CODE

CODE(text):计算文本串中第一个字

- mysql安装出错

林鹤霄

mysql安装

[root@localhost ~]# rpm -ivh MySQL-server-5.5.24-1.linux2.6.x86_64.rpm Preparing... #####################

- linux下编译libuv

aigo

libuv

下载最新版本的libuv源码,解压后执行:

./autogen.sh

这时会提醒找不到automake命令,通过一下命令执行安装(redhat系用yum,Debian系用apt-get):

# yum -y install automake

# yum -y install libtool

如果提示错误:make: *** No targe

- 中国行政区数据及三级联动菜单

alxw4616

近期做项目需要三级联动菜单,上网查了半天竟然没有发现一个能直接用的!

呵呵,都要自己填数据....我了个去这东西麻烦就麻烦的数据上.

哎,自己没办法动手写吧.

现将这些数据共享出了,以方便大家.嗯,代码也可以直接使用

文件说明

lib\area.sql -- 县及县以上行政区划分代码(截止2013年8月31日)来源:国家统计局 发布时间:2014-01-17 15:0

- 哈夫曼加密文件

百合不是茶

哈夫曼压缩哈夫曼加密二叉树

在上一篇介绍过哈夫曼编码的基础知识,下面就直接介绍使用哈夫曼编码怎么来做文件加密或者压缩与解压的软件,对于新手来是有点难度的,主要还是要理清楚步骤;

加密步骤:

1,统计文件中字节出现的次数,作为权值

2,创建节点和哈夫曼树

3,得到每个子节点01串

4,使用哈夫曼编码表示每个字节

- JDK1.5 Cyclicbarrier实例

bijian1013

javathreadjava多线程Cyclicbarrier

CyclicBarrier类

一个同步辅助类,它允许一组线程互相等待,直到到达某个公共屏障点 (common barrier point)。在涉及一组固定大小的线程的程序中,这些线程必须不时地互相等待,此时 CyclicBarrier 很有用。因为该 barrier 在释放等待线程后可以重用,所以称它为循环的 barrier。

CyclicBarrier支持一个可选的 Runnable 命令,

- 九项重要的职业规划

bijian1013

工作学习

一. 学习的步伐不停止 古人说,活到老,学到老。终身学习应该是您的座右铭。 世界在不断变化,每个人都在寻找各自的事业途径。 您只有保证了足够的技能储

- 【Java范型四】范型方法

bit1129

java

范型参数不仅仅可以用于类型的声明上,例如

package com.tom.lang.generics;

import java.util.List;

public class Generics<T> {

private T value;

public Generics(T value) {

this.value =

- 【Hadoop十三】HDFS Java API基本操作

bit1129

hadoop

package com.examples.hadoop;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FileStatus;

import org.apache.hadoo

- ua实现split字符串分隔

ronin47

lua split

LUA并不象其它许多"大而全"的语言那样,包括很多功能,比如网络通讯、图形界面等。但是LUA可以很容易地被扩展:由宿主语言(通常是C或 C++)提供这些功能,LUA可以使用它们,就像是本来就内置的功能一样。LUA只包括一个精简的核心和最基本的库。这使得LUA体积小、启动速度快,从 而适合嵌入在别的程序里。因此在lua中并没有其他语言那样多的系统函数。习惯了其他语言的字符串分割函

- java-从先序遍历和中序遍历重建二叉树

bylijinnan

java

public class BuildTreePreOrderInOrder {

/**

* Build Binary Tree from PreOrder and InOrder

* _______7______

/ \

__10__ ___2

/ \ /

4

- openfire开发指南《连接和登陆》

开窍的石头

openfire开发指南smack

第一步

官网下载smack.jar包

下载地址:http://www.igniterealtime.org/downloads/index.jsp#smack

第二步

把smack里边的jar导入你新建的java项目中

开始编写smack连接openfire代码

p

- [移动通讯]手机后盖应该按需要能够随时开启

comsci

移动

看到新的手机,很多由金属材质做的外壳,内存和闪存容量越来越大,CPU速度越来越快,对于这些改进,我们非常高兴,也非常欢迎

但是,对于手机的新设计,有几点我们也要注意

第一:手机的后盖应该能够被用户自行取下来,手机的电池的可更换性应该是必须保留的设计,

- 20款国外知名的php开源cms系统

cuiyadll

cms

内容管理系统,简称CMS,是一种简易的发布和管理新闻的程序。用户可以在后端管理系统中发布,编辑和删除文章,即使您不需要懂得HTML和其他脚本语言,这就是CMS的优点。

在这里我决定介绍20款目前国外市面上最流行的开源的PHP内容管理系统,以便没有PHP知识的读者也可以通过国外内容管理系统建立自己的网站。

1. Wordpress

WordPress的是一个功能强大且易于使用的内容管

- Java生成全局唯一标识符

darrenzhu

javauuiduniqueidentifierid

How to generate a globally unique identifier in Java

http://stackoverflow.com/questions/21536572/generate-unique-id-in-java-to-label-groups-of-related-entries-in-a-log

http://stackoverflow

- php安装模块检测是否已安装过, 使用的SQL语句

dcj3sjt126com

sql

SHOW [FULL] TABLES [FROM db_name] [LIKE 'pattern']

SHOW TABLES列举了给定数据库中的非TEMPORARY表。您也可以使用mysqlshow db_name命令得到此清单。

本命令也列举数据库中的其它视图。支持FULL修改符,这样SHOW FULL TABLES就可以显示第二个输出列。对于一个表,第二列的值为BASE T

- 5天学会一种 web 开发框架

dcj3sjt126com

Web框架framework

web framework层出不穷,特别是ruby/python,各有10+个,php/java也是一大堆 根据我自己的经验写了一个to do list,按照这个清单,一条一条的学习,事半功倍,很快就能掌握 一共25条,即便很磨蹭,2小时也能搞定一条,25*2=50。只需要50小时就能掌握任意一种web框架

各类web框架大同小异:现代web开发框架的6大元素,把握主线,就不会迷路

建议把本文

- Gson使用三(Map集合的处理,一对多处理)

eksliang

jsongsonGson mapGson 集合处理

转载请出自出处:http://eksliang.iteye.com/blog/2175532 一、概述

Map保存的是键值对的形式,Json的格式也是键值对的,所以正常情况下,map跟json之间的转换应当是理所当然的事情。 二、Map参考实例

package com.ickes.json;

import java.lang.refl

- cordova实现“再点击一次退出”效果

gundumw100

android

基本的写法如下:

document.addEventListener("deviceready", onDeviceReady, false);

function onDeviceReady() {

//navigator.splashscreen.hide();

document.addEventListener("b

- openldap configuration leaning note

iwindyforest

configuration

hostname // to display the computer name

hostname <changed name> // to change

go to: /etc/sysconfig/network, add/modify HOSTNAME=NEWNAME to change permenately

dont forget to change /etc/hosts

- Nullability and Objective-C

啸笑天

Objective-C

https://developer.apple.com/swift/blog/?id=25

http://www.cocoachina.com/ios/20150601/11989.html

http://blog.csdn.net/zhangao0086/article/details/44409913

http://blog.sunnyxx

- jsp中实现参数隐藏的两种方法

macroli

JavaScriptjsp

在一个JSP页面有一个链接,//确定是一个链接?点击弹出一个页面,需要传给这个页面一些参数。//正常的方法是设置弹出页面的src="***.do?p1=aaa&p2=bbb&p3=ccc"//确定目标URL是Action来处理?但是这样会在页面上看到传过来的参数,可能会不安全。要求实现src="***.do",参数通过其他方法传!//////

- Bootstrap A标签关闭modal并打开新的链接解决方案

qiaolevip

每天进步一点点学习永无止境bootstrap纵观千象

Bootstrap里面的js modal控件使用起来很方便,关闭也很简单。只需添加标签 data-dismiss="modal" 即可。

可是偏偏有时候需要a标签既要关闭modal,有要打开新的链接,尝试多种方法未果。只好使用原始js来控制。

<a href="#/group-buy" class="btn bt

- 二维数组在Java和C中的区别

流淚的芥末

javac二维数组数组

Java代码:

public class test03 {

public static void main(String[] args) {

int[][] a = {{1},{2,3},{4,5,6}};

System.out.println(a[0][1]);

}

}

运行结果:

Exception in thread "mai

- systemctl命令用法

wmlJava

linuxsystemctl

对比表,以 apache / httpd 为例 任务 旧指令 新指令 使某服务自动启动 chkconfig --level 3 httpd on systemctl enable httpd.service 使某服务不自动启动 chkconfig --level 3 httpd off systemctl disable httpd.service 检查服务状态 service h