RNN, LSTM 图文详解

文章目录

- 往期文章链接目录

- Sequence Data

- Why not use a standard neural network for sequence tasks

- RNN

- Different types of RNNs

- Loss function of RNN

- Backpropagation through time

- Vanishing gradients with RNNs

- Advantages and Drawbacks of RNN

- LSTM

- Types of gates

- formulas and illustration of formulas

- Variants of RNNs

- Bi-directional RNN

- Deep RNN

- 往期文章链接目录

往期文章链接目录

Sequence Data

There are many sequence data in applications. Here are some examples

-

Machine translation

- from text sequence to text sequence.

-

Text Summarization

- from text sequence to text sequence.

-

Sentiment classification

- from text sequence to categories.

-

Music Generation

- from nothing or some simple stuff (character, integer, etc) to wave sequence.

-

Name entity recognition (NER)

- From text sequence to label sequence.

Why not use a standard neural network for sequence tasks

-

Inputs, outputs can be different lengths in different examples. This can be solved by standard neural network by paddings with the maximum lengths but it’s not a good solution since there would be too many parameters.

-

Doesn’t share features learned across different positions of text/sequence. Note that Convolutional Neural Network (CNN) is a good example of parameter sharing, so we should have a similar model for sequence data.

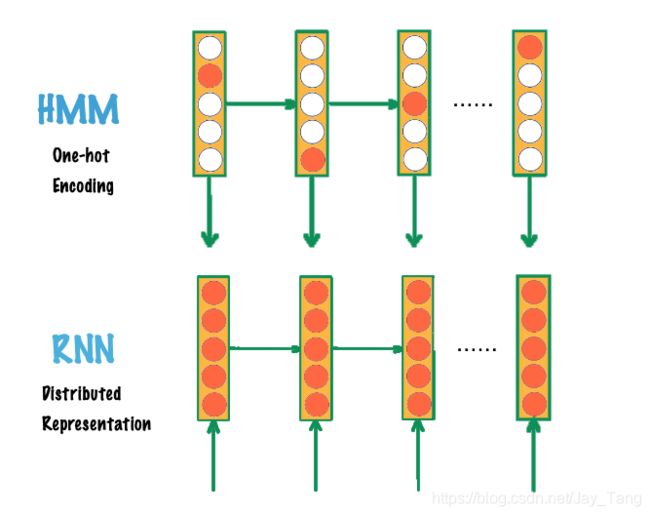

RNN

A Recurrent Neural Network (RNN) can be thought of as multiple copies of the same network, each passing a message to a successor. This chain-like nature reveals that recurrent neural networks are intimately related to sequences. Therefore, they’re the natural architecture of neural network to use for sequence data. Note that it also allows previous outputs to be used as inputs.

For each time step t t t, the activation a < t > a^{

-

a < t > = g 1 ( W a a a < t − 1 > + W a x x < t > + b a ) a^{

}=g_{1}\left(W_{a a} a^{ a<t>=g1(Waaa<t−1>+Waxx<t>+ba)}+W_{a x} x^{ }+b_{a}\right) -

y < t > = g 2 ( W y a a < t > + b y ) y^{

}=g_{2}\left(W_{y a} a^{ y<t>=g2(Wyaa<t>+by)}+b_{y}\right)

These calculation could be visualized in the following figure

Note:

-

dimension of W a a W_{a a} Waa: (number of hidden neurons, number of hidden neurons)

-

dimension of W a x W_{a x} Wax: (number of hidden neurons, length of x x x)

-

dimension of W y a W_{y a} Wya: (length of y y y, number of hidden neurons)

-

The weight matrix W a a W_{a a} Waa is the memory the RNN is trying to maintain from the previous layers.

-

dimension of b a b_a ba: (number of hidden neurons, 1)

-

dimension of b y b_y by: (length of y y y, 1)

We can simplify the notations further;

-

a < t > = g 1 ( W a [ a < t − 1 > , x < t > ] + b a ) a^{

}=g_{1}\left(W_{a} \, [a^{ a<t>=g1(Wa[a<t−1>,x<t>]+ba)}, x^{ }] + b_{a}\right) -

y < t > = g 2 ( W y a < t > + b y ) y^{

}=g_{2}\left(W_{y}\, a^{ y<t>=g2(Wya<t>+by)}+b_{y}\right)

Note:

-

w a w_a wa is w a a w_{aa} waa and w a x w_{ax} wax stacked horizontally.

-

[ a < t − 1 > , x < t > ] [a^{

}, x^{ [a<t−1>,x<t>] is a < t − 1 > a^{}] } a<t−1> and x < t > x^{} x<t> stacked vertically. -

dimension of w a w_a wa: (number of hidden neurons, number of hidden neurons + + + length of x x x)

-

dimension of [ a < t − 1 > , x < t > ] [a^{

}, x^{ [a<t−1>,x<t>]: (number of hidden neurons + + + length of x x x, 1)}]

Different types of RNNs

Loss function of RNN

The loss function L \mathcal{L} L of all time steps is defined based on the loss at every time step as follows:

L ( y ^ , y ) = ∑ t = 1 T y L ( y ^ < t > , y < t > ) \mathcal{L}(\hat{y}, y)=\sum_{t=1}^{T_{y}} \mathcal{L}\left(\hat{y}^{

Backpropagation through time

Backpropagation is done at each point in time. At timestep t t t, the derivative of the loss

L \mathcal{L} L with respect to weight matrix W W W is expressed as follows:

∂ L ( t ) ∂ W = ∑ k = 0 t ∂ L ( t ) ∂ W ∣ ( k ) = ∑ k = 0 t ∂ L ( t ) ∂ y < t > ∂ y < t > ∂ a < t > ∂ a < t > ∂ a < k > ∂ a < k > ∂ W ( 1 ) = ∑ k = 0 t ∂ L ( t ) ∂ y < t > ∂ y < t > ∂ a < t > ( ∏ j = k + 1 t ∂ a < j > ∂ a < j − 1 > ) ∂ a < k > ∂ W ( 2 ) \begin{aligned} \frac{\partial \mathcal{L}^{(t)}}{\partial W} &= \left. \sum_{k=0}^{t} \frac{\partial \mathcal{L}^{(t)}}{\partial W}\right|_{(k)} \\ &= \sum_{k=0}^{t} \frac{\partial \mathcal{L}^{(t)}}{\partial y^{

Note that from ( 1 ) (1) (1) to ( 2 ) (2) (2), we used the chain rule on ∂ a < t > ∂ a < k > \frac{\partial a^{

Vanishing gradients with RNNs

Suppose we are working with language modeling problem and there are two sequences that model tries to learn:

- “The cat, which already ate …, was full”

- “The cats, which already ate …, were full”

The naive RNN is not very good at capturing very long-term dependencies like this. The reason is clear from the above section (Backpropagation through time).

Advantages and Drawbacks of RNN

Advantages:

-

Possibility of processing input of any length.

-

Model size not increasing with size of input.

-

Computation takes into account historical information.

-

Weights are shared across time.

Drawbacks:

-

Computation being slow.

-

Difficulty of accessing information from a long time ago.

-

Cannot consider any future input for the current state.

LSTM

Long Short Term Memory (LSTM) networks are a special kind of RNN, capable of learning long-term dependencies. LSTMs are explicitly designed to avoid the long-term dependency problem. Remembering information for long periods of time is practically their default behavior, not something they struggle to learn.

Types of gates

In order to remedy the vanishing gradient problem, specific gates are used in some types of RNNs and usually have a well-defined purpose. They are usually noted Γ \Gamma Γ and are equal to:

Γ = σ ( W 1 a < t − 1 > + W 2 x < t > + b ) \Gamma=\sigma\left(W_1 a^{

where W 1 , W 2 , b W_1, W_2, b W1,W2,b are coefficients specific to the gate and σ \sigma σ is the sigmoid function. We can also simplify it to

Γ = σ ( W [ a < t − 1 > , x < t > ] + b ) \Gamma=\sigma\left(W \left[a^{

-

Relevance gate Γ r \Gamma_{r} Γr: Drop previous information?

-

Forget gate Γ f \Gamma_{f} Γf: Erase a cell or not?

-

Output gate Γ o \Gamma_{o} Γo: How much to reveal of a cell?

formulas and illustration of formulas

Variants of RNNs

Bi-directional RNN

-

Part of the forward propagation goes from left to right, and part from right to left. Note that this is just a combination of two uni-directional RNN. It can’t strictly learn from “both sides”.

-

To make predictions we use y ^ < t > \hat{y}^{

} y^<t> by using the two activations that come from left and right. -

The blocks here can be any RNN block including the basic RNNs, LSTMs, or GRUs.

-

The disadvantage of Bi-RNNs that you need the entire sequence before you can process it. For example, in live speech recognition if you use BiRNNs you will need to wait for the person who speaks to stop to take the entire sequence and then make your predictions.

Deep RNN

Note: In feed-forward deep nets, there could be 100 100 100 or even 200 200 200 layers. In deep RNNs stacking 3 3 3 layers is already considered deep and expensive to train.

Reference:

- http://colah.github.io/posts/2015-08-Understanding-LSTMs/

- https://github.com/mbadry1/DeepLearning.ai-Summary/tree/master/5-%20Sequence%20Models#recurrent-neural-networks

- https://stanford.edu/~shervine/teaching/cs-230/cheatsheet-recurrent-neural-networks