Pitfalls of Running Spark on an HDP YARN Cluster

Cluster environment

ambari: HDP-2.6.5.0

spark-2.1.0-bin-hadoop2.7

Pitfall 1:

NoClassDefFoundError: org/glassfish/jersey/server/spi/Container

or

NoClassDefFoundError: com/sun/jersey/api/client/config/ClientConfig

or

NoClassDefFoundError: com/sun/jersey/core/util/FeaturesAndProperties

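Solution:

The cause is that this class exists in version 1.9 of the jersey-client package, whereas the Spark distribution used here depends on Jersey 2.x. After the Jersey upgrade, the jars containing these classes are no longer shipped.

# None of the jars contains this class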

[root@linux jars]$ pwd

/opt/spark-2.1.0-bin-hadoop2.7/jars

[root@linux jars]$ grep "FeaturesAndProperties" ./ -Rn

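[root@linux jars]$

Most solutions found online say to replace the jars under spark/jars with jersey-client-1.9.jar and jersey-server-1.9.jar from /usr/hdp/2.6.5.0-292/hadoop/conf. I tried this and it does not work. Changing the following setting in yarn-site.xml is enough, since disabling the timeline service keeps the YARN client from creating the timeline client that needs the Jersey 1.x classes: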

<property>
  <name>yarn.timeline-service.enabled</name>
  <value>false</value>
</property>

Restart the YARN cluster, and it works!
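One way to double-check that the edited yarn-site.xml really carries the new value is to read the property back from the file. The following is only a minimal sketch: the function name `yarn_property_value` is hypothetical, and it assumes the file is laid out with `<name>` and `<value>` on the same or adjacent lines, as in the property shown above.

```shell
# Print the value of one property from a Hadoop-style XML config file.
# $1 = path to the config file, $2 = property name.
# Assumption: <value> appears on the same line as <name> or within
# the two lines that follow it.
yarn_property_value() {
  grep -F -A2 "<name>$2</name>" "$1" \
    | sed -n 's:.*<value>\(.*\)</value>.*:\1:p' \
    | head -n 1
}
```

For example, `yarn_property_value "$HADOOP_CONF_DIR/yarn-site.xml" yarn.timeline-service.enabled` should print `false` once the change is in place.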

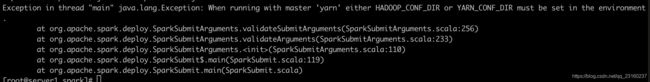

Pitfall 2:

Exception in thread "main" java.lang.Exception: When running with master 'yarn' either HADOOP_CONF_DIR or YARN_CONF_DIR must be set in the environment

Solution:

Add the following to conf/spark-env.sh:

export HADOOP_CONF_DIR=/usr/hdp/2.6.5.0-292/hadoop/conf

export SPARK_HOME=/opt/spark
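Before submitting a job, it can help to confirm that the configured directory actually looks like a Hadoop client configuration. The sketch below is an illustration, not part of Spark: the function name `check_hadoop_conf_dir` is hypothetical, and treating the presence of yarn-site.xml as the test is an assumption.

```shell
# Return 0 if the given directory looks like a Hadoop client config directory.
# $1 = candidate directory, typically "$HADOOP_CONF_DIR".
check_hadoop_conf_dir() {
  dir="$1"
  if [ -z "$dir" ]; then
    echo "HADOOP_CONF_DIR is not set" >&2
    return 1
  fi
  # Assumption: a usable config directory contains yarn-site.xml.
  if [ ! -f "$dir/yarn-site.xml" ]; then
    echo "no yarn-site.xml under $dir" >&2
    return 1
  fi
  return 0
}
```

Calling `check_hadoop_conf_dir "$HADOOP_CONF_DIR"` in the shell that launches spark-submit shows whether the export in spark-env.sh points at a real configuration directory.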

Pitfall 3:

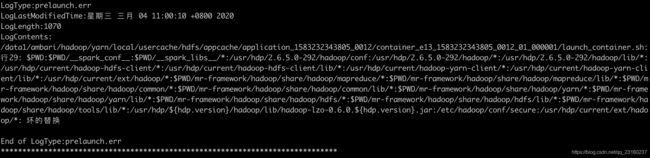

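Spark on YARN starts normally in client mode, but fails to start in cluster mode with the error below. The log comes from a Chinese-locale shell: 行29 means "line 29" and 坏的替换 is bash's "bad substitution" message.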

/data1/ambari/hadoop/yarn/local/usercache/hdfs/appcache/application_1583232343805_0012/container_e13_1583232343805_0012_01_000001/launch_container.sh:行29: $PWD:$PWD/__spark_conf__:$PWD/__spark_libs__/*:/usr/hdp/2.6.5.0-292/hadoop/conf:/usr/hdp/2.6.5.0-292/hadoop/*:/usr/hdp/2.6.5.0-292/hadoop/lib/*:/usr/hdp/current/hadoop-hdfs-client/*:/usr/hdp/current/hadoop-hdfs-client/lib/*:/usr/hdp/current/hadoop-yarn-client/*:/usr/hdp/current/hadoop-yarn-client/lib/*:/usr/hdp/current/ext/hadoop/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/*:$PWD/mr-framework/hadoop/share/hadoop/mapreduce/lib/*:$PWD/mr-framework/hadoop/share/hadoop/common/*:$PWD/mr-framework/hadoop/share/hadoop/common/lib/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/*:$PWD/mr-framework/hadoop/share/hadoop/yarn/lib/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/*:$PWD/mr-framework/hadoop/share/hadoop/hdfs/lib/*:$PWD/mr-framework/hadoop/share/hadoop/tools/lib/*:/usr/hdp/${hdp.version}/hadoop/lib/hadoop-lzo-0.6.0.${hdp.version}.jar:/etc/hadoop/conf/secure:/usr/hdp/current/ext/hadoop/*: 坏的替换

End of LogType:prelaunch.err

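Solution:

The classpath in the log still contains the literal placeholder ${hdp.version}. Bash cannot expand it, because a variable name may not contain a dot, so the launch script aborts with the "bad substitution" (坏的替换) error.

1) Add to conf/spark-defaults.conf: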

spark.driver.extraJavaOptions -Dhdp.version=2.6.5.0-292

spark.yarn.am.extraJavaOptions -Dhdp.version=2.6.5.0-292

2) In the mapred-site.xml file under HADOOP_CONF_DIR, replace the hdp.version placeholder with the current version. sed can do the replacement in bulk:

sed -i 's/${hdp.version}/2.6.5.0-292/g' mapred-site.xml
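A slightly more defensive variant of the same replacement is sketched below. It escapes the `$` and `.` so that only the literal `${hdp.version}` text matches, and it keeps a `.bak` copy instead of relying on `sed -i`. The function name `replace_hdp_version` is hypothetical, and the sketch assumes the version string contains no `/` or `&` characters.

```shell
# Replace every literal ${hdp.version} in a file with a concrete version,
# keeping the original as <file>.bak.
# $1 = file to edit, $2 = version string (assumed free of "/" and "&").
replace_hdp_version() {
  file="$1"
  version="$2"
  cp "$file" "$file.bak"
  # \$ and \. match a literal dollar sign and dot; the braces are literal
  # in sed's basic regular expressions.
  sed 's/\${hdp\.version}/'"$version"'/g' "$file.bak" > "$file"
}
```

For example, `replace_hdp_version mapred-site.xml 2.6.5.0-292` run inside HADOOP_CONF_DIR rewrites the file and leaves mapred-site.xml.bak for comparison or rollback.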