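Batch Normalization in Neural Networks

1. What is Batch Normalization?

Batch Normalization (BN) standardizes the input data X of a layer so that it follows a consistent distribution, which makes training simpler and faster. In general, Batch Normalization is placed after a convolutional layer, that is: convolution + BN layer + activation function.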
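The convolution + BN + activation ordering described above can be sketched in PyTorch as follows. This is a minimal illustration rather than a recommended architecture: the channel counts, kernel size, input shape, and the choice of ReLU as the activation are assumptions made for the example.

```python
import torch
from torch import nn

# Conv -> BN -> activation. Channel counts and kernel size are arbitrary
# values chosen for illustration only.
block = nn.Sequential(
    # The conv bias is dropped because BN subtracts the per-channel mean,
    # which would cancel a constant bias anyway.
    nn.Conv2d(3, 16, kernel_size=3, padding=1, bias=False),
    nn.BatchNorm2d(16),  # one (gamma, beta) pair per output channel
    nn.ReLU(),
)

x = torch.randn(8, 3, 32, 32)  # (batch, channels, height, width)
y = block(x)
print(y.shape)  # torch.Size([8, 16, 32, 32])
```

Note that `nn.BatchNorm2d` takes the number of channels produced by the preceding convolution, not the spatial size of the feature map.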

An illustration of why Batch Normalization is needed

Input layer of the neural network:

Hidden layers:

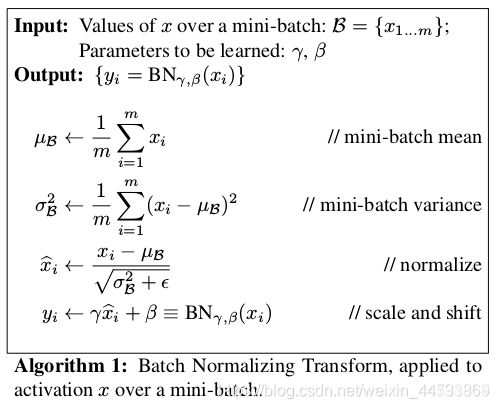

2. How Batch Normalization is computed

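- 1. Compute the mean of the input data X.

- 2. Subtract the mean from step 1 from X, square the differences, and average them to obtain the variance of X.

- 3. Normalize the data using X, the mean from step 1, and the variance from step 2: subtract the mean from X, then divide by the square root of the variance. A very small constant is added to the variance before taking the square root so that the denominator cannot be zero.

- 4. Introduce the variables γ and β to scale and shift the normalized data. With these two parameters, the network can learn to recover the feature distribution that the original network would have learned.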
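The four steps above can be traced on a small tensor. The sketch below assumes a 2D input of shape (batch, features) for readability, with a made-up batch of 32 samples and 4 features; γ and β are set to their usual initial values of 1 and 0, so step 4 leaves the normalized data unchanged here.

```python
import torch

torch.manual_seed(0)
# Hypothetical input: 32 samples, 4 features, deliberately far from
# zero mean and unit variance.
x = torch.randn(32, 4) * 5.0 + 10.0
eps = 1e-5  # small constant that keeps the denominator away from zero

mean = x.mean(dim=0, keepdim=True)                 # step 1: per-feature mean
var = ((x - mean) ** 2).mean(dim=0, keepdim=True)  # step 2: per-feature variance
x_hat = (x - mean) / torch.sqrt(var + eps)         # step 3: normalize

gamma = torch.ones(1, 4)   # scale, initial value 1
beta = torch.zeros(1, 4)   # shift, initial value 0
y = gamma * x_hat + beta   # step 4: scale and shift

print(x_hat.mean(dim=0))                 # each entry is close to 0
print(x_hat.var(dim=0, unbiased=False))  # each entry is close to 1
```

The statistics are taken over the batch dimension only, so each feature is normalized independently of the others.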

Benefits of the BN layer

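- 1. Faster convergence. Neural networks exhibit internal covariate shift: the distribution of each layer's inputs changes as the parameters of earlier layers are updated. If the data distribution differs from layer to layer, the network is very hard to converge. If each layer's data is transformed to have zero mean and unit variance, every layer sees a similar distribution and training converges more easily.

- 2. Mitigating exploding and vanishing gradients. For vanishing gradients, take the Sigmoid function as an example: its output lies in (0, 1), and once |x| becomes large, the gradient of the sigmoid becomes very small, which makes training difficult. Normalizing the data keeps the inputs in the region where the gradient is relatively large. For exploding gradients, during backpropagation the gradient of each layer is obtained by multiplying the gradient passed back from the layer after it by the data of the current layer. With normalization, the data stays centered around 0 with a bounded scale, so the gradients of each layer are much less likely to explode.

- 3. Reducing overfitting. During training, BN ties together all samples in a minibatch, so the network does not produce a deterministic output from any single training sample and is not pushed hard in the direction of that one sample. This avoids overfitting to some extent.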
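The sigmoid saturation mentioned in the second point can be checked numerically. The sketch below uses only the standard library; the helper names `sigmoid` and `sigmoid_grad` and the sample inputs 0, 2, and 10 are chosen for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    # Derivative of the sigmoid: s(x) * (1 - s(x)); its maximum is 0.25 at x = 0.
    s = sigmoid(x)
    return s * (1.0 - s)


# Inputs near 0 (as after normalization) versus a large, unnormalized input.
for x in (0.0, 2.0, 10.0):
    print(f"x = {x:5.1f}  gradient = {sigmoid_grad(x):.6f}")
```

The gradient at x = 10 is several thousand times smaller than at x = 0, which is why keeping pre-activation values near zero helps the gradient signal survive.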

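The role of γ and β

After the first three steps, the BN layer introduces the variables γ and β to scale and shift the normalized data.

γ and β are network parameters, and they are learnable.

Scaling and shifting with γ and β gives the network the ability to adapt. When standardization helps, the network can leave its effect largely intact; when standardization hurts, the network can cancel out part of it. In effect, the network learns whether to standardize and how to strike a balance.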
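To make the "cancel out" idea concrete, the sketch below shows one particular setting of γ and β that exactly undoes the standardization: γ equal to the batch standard deviation and β equal to the batch mean. These values are set by hand here purely for illustration; in a real network γ and β are learned by gradient descent and need not reach these values. The input shape and scale are made up.

```python
import torch

torch.manual_seed(0)
x = torch.randn(16, 3) * 2.0 + 7.0  # hypothetical batch: 16 samples, 3 features
eps = 1e-5

mean = x.mean(dim=0, keepdim=True)
var = x.var(dim=0, unbiased=False, keepdim=True)
x_hat = (x - mean) / torch.sqrt(var + eps)  # standardized data

# Hand-picked parameters that invert the standardization.
gamma = torch.sqrt(var + eps)
beta = mean
y = gamma * x_hat + beta

print(torch.allclose(y, x, atol=1e-4))  # True: the original data is recovered
```

With γ = 1 and β = 0 the layer is a pure standardization, so the learnable parameters span everything between full standardization and the identity mapping.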

PyTorch implementation of Batch Normalization

    import torch
    from torch import nn


    def batch_norm(is_training, x, gamma, beta, moving_mean, moving_var, eps=1e-5, momentum=0.9):
        """Batch normalization for a 4D input of shape (N, C, H, W)."""
        if not is_training:
            # Inference: normalize with the moving averages collected during training.
            x_hat = (x - moving_mean) / torch.sqrt(moving_var + eps)
        else:
            # Training: per-channel statistics over the batch and spatial dimensions.
            mean = x.mean(dim=(0, 2, 3), keepdim=True)
            var = ((x - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
            x_hat = (x - mean) / torch.sqrt(var + eps)
            # Update the moving averages. detach() keeps them out of the autograd
            # graph, so graphs from earlier iterations are not kept alive.
            moving_mean = momentum * moving_mean + (1.0 - momentum) * mean.detach()
            moving_var = momentum * moving_var + (1.0 - momentum) * var.detach()
        # Scale and shift with the learnable parameters.
        y = gamma * x_hat + beta
        return y, moving_mean, moving_var


    class BatchNorm2d(nn.Module):
        def __init__(self, num_features):
            super().__init__()
            shape = (1, num_features, 1, 1)
            self.gamma = nn.Parameter(torch.ones(shape))   # scale, initialized to 1
            self.beta = nn.Parameter(torch.zeros(shape))   # shift, initialized to 0
            # Buffers are saved with the model and follow .to(device), but are not
            # trained, so no manual device handling is needed in forward().
            self.register_buffer('moving_mean', torch.zeros(shape))
            self.register_buffer('moving_var', torch.ones(shape))

        def forward(self, x):
            y, self.moving_mean, self.moving_var = batch_norm(
                self.training, x, self.gamma, self.beta,
                self.moving_mean, self.moving_var, eps=1e-5, momentum=0.9)
            return y
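For comparison, the same training/inference distinction can be observed with PyTorch's built-in `nn.BatchNorm2d`. One difference in convention is worth noting: in the implementation above, `momentum=0.9` is the weight of the old moving average, whereas in `nn.BatchNorm2d` the `momentum` argument is the weight of the new batch statistic, so the equivalent built-in setting is `momentum=0.1` (the PyTorch default). The input shape and scale below are made up for the sketch.

```python
import torch
from torch import nn

torch.manual_seed(0)
bn = nn.BatchNorm2d(3, momentum=0.1)     # 0.1 is the weight of the new batch statistic
x = torch.randn(4, 3, 8, 8) * 3.0 + 5.0  # hypothetical input, (N, C, H, W)

bn.train()
y_train = bn(x)  # normalizes with batch statistics and updates the running ones
batch_mean = x.mean(dim=(0, 2, 3))
# running_mean starts at 0, so after one step it is 0.9 * 0 + 0.1 * batch_mean.
print(torch.allclose(bn.running_mean, 0.1 * batch_mean, atol=1e-5))

bn.eval()
y_eval = bn(x)   # normalizes with the running statistics instead
print(torch.allclose(y_train, y_eval))   # the two modes give different outputs
```

After only one training step the running statistics are still far from the batch statistics, which is why the outputs in the two modes differ so clearly; the gap shrinks as more batches are seen.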

References

https://blog.csdn.net/weixin_44791964/article/details/114998793?spm=1001.2014.3001.5501