Lab 3: Introductory MapReduce Programming Practice

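I. Objectives

- Learn basic MapReduce programming through hands-on practice;

- Learn to use MapReduce to solve common data-processing problems, including data deduplication, data sorting, and data mining.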

II. Platform

- Operating system: Kubuntu

- Hadoop version: 3.2.2

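III. Procedure

(1) Merge files and remove duplicates

Given two input files, file A and file B, write a MapReduce program that merges the two files and removes duplicate content, producing a new output file C. Sample input and output files are shown below for reference.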

Sample input file A:

20170101 x

20170102 y

20170103 x

20170104 y

20170105 z

20170106 x

Sample input file B:

20170101 y

20170102 y

20170103 x

20170104 z

20170105 y

Sample output file C, obtained by merging input files A and B:

20170101 x

20170101 y

20170102 y

20170103 x

20170104 y

20170104 z

20170105 y

20170105 z

20170106 x

The MapReduce program for this task:

    import java.io.BufferedReader;
    import java.io.IOException;
    import java.io.InputStreamReader;
    import java.nio.charset.StandardCharsets;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class DuplicateRemoval {

        // Map: emit each input line as the key, so identical lines from
        // file A and file B end up in the same reduce call.
        public static class TokenizerMapper extends Mapper<Object, Text, Text, Text> {
            private static final Text EMPTY = new Text("");

            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                context.write(value, EMPTY);
            }
        }

        // Reduce: write each distinct key once, however often it occurred.
        // The input key type must be Text (not Object); otherwise this method
        // only overloads Reducer.reduce, the default identity reducer runs,
        // and duplicates are not removed.
        public static class Reduce extends Reducer<Text, Text, Text, Text> {
            private static final Text EMPTY = new Text("");

            @Override
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                context.write(key, EMPTY);
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");

            Job job = Job.getInstance(conf, "merge and duplicate removal");
            job.setJarByClass(DuplicateRemoval.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(Reduce.class);
            job.setReducerClass(Reduce.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/output1/fileA"));
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/output1/fileB"));
            FileOutputFormat.setOutputPath(job, new Path("hdfs://localhost:9000/output2"));

            // Run the job first. Calling System.exit at this point would
            // terminate the JVM before the result is printed.
            boolean success = job.waitForCompletion(true);

            if (success) {
                // With the default single reduce task the result is one part file.
                Path filepath = new Path("hdfs://localhost:9000/output2/part-r-00000");
                FileSystem fs = filepath.getFileSystem(conf);
                try (BufferedReader reader = new BufferedReader(
                        new InputStreamReader(fs.open(filepath), StandardCharsets.UTF_8))) {
                    String line;
                    while ((line = reader.readLine()) != null) {
                        System.out.println(line);
                    }
                }
            }
            System.exit(success ? 0 : 1);
        }
    }
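The deduplication idea in this task can be checked locally without a cluster. The following is a minimal plain-Java sketch, not the Hadoop job itself: it assumes that a sorted set is an acceptable stand-in for the shuffle phase, which groups and sorts identical keys. The class name `DedupSketch` and the method `mergeAndDedup` are hypothetical names introduced for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.TreeSet;

// Local sketch only: mimics map -> shuffle -> reduce without Hadoop.
class DedupSketch {

    // "Map" treats every whole line as a key. The "shuffle" groups equal
    // keys and sorts them, and "reduce" emits each distinct key once.
    // For small inputs a TreeSet has the same effect: unique, sorted lines.
    static List<String> mergeAndDedup(List<String> fileA, List<String> fileB) {
        TreeSet<String> distinctLines = new TreeSet<>();
        distinctLines.addAll(fileA);
        distinctLines.addAll(fileB);
        return new ArrayList<>(distinctLines);
    }

    public static void main(String[] args) {
        // Sample contents of file A and file B from the task description.
        List<String> fileA = Arrays.asList("20170101 x", "20170102 y", "20170103 x",
                "20170104 y", "20170105 z", "20170106 x");
        List<String> fileB = Arrays.asList("20170101 y", "20170102 y", "20170103 x",
                "20170104 z", "20170105 y");
        for (String line : mergeAndDedup(fileA, fileB)) {
            System.out.println(line);
        }
    }
}
```

Run on the sample files A and B, the sketch yields the nine lines of sample file C. A real job may additionally append a tab and an empty value to each line, depending on the output value type.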

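(2) Sort the contents of the input files

There are several input files, and every line of each file contains one integer. Read the integers from all files, sort them in ascending order, and write them to a new file. Each output line holds two integers: the first is the rank of the second in the sorted order, and the second is the original integer. Sample input and output files are shown below for reference.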

Sample input file 1:

33

37

12

40

Sample input file 2:

4

16

39

5

Sample input file 3:

1

45

25

Output file obtained from input files 1, 2, and 3:

1 1

2 4

3 5

4 12

5 16

6 25

7 33

8 37

9 39

10 40

11 45
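The expected output above can be reproduced locally with a small plain-Java sketch, which is handy for checking a job's result. It is not the MapReduce implementation: it assumes all numbers fit in memory and simply sorts them and numbers the lines from 1. The names `RankSketch` and `rank` are hypothetical, introduced for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Local sketch only: sort all integers and prefix each with its rank.
class RankSketch {

    // Returns lines of the form "<rank> <value>", with ranks starting at 1.
    // Duplicate values receive consecutive ranks, one line per occurrence.
    static List<String> rank(List<List<Integer>> files) {
        List<Integer> all = new ArrayList<>();
        for (List<Integer> file : files) {
            all.addAll(file);
        }
        Collections.sort(all);

        List<String> lines = new ArrayList<>();
        int rank = 1;
        for (int value : all) {
            lines.add(rank + " " + value);
            rank++;
        }
        return lines;
    }

    public static void main(String[] args) {
        // Sample contents of input files 1, 2, and 3 from the task description.
        List<List<Integer>> files = Arrays.asList(
                Arrays.asList(33, 37, 12, 40),
                Arrays.asList(4, 16, 39, 5),
                Arrays.asList(1, 45, 25));
        for (String line : rank(files)) {
            System.out.println(line);
        }
    }
}
```

In a MapReduce job the sorting is done by the shuffle phase, because the integers are emitted as keys, and the reducer only has to keep a running counter.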

The MapReduce program for this task:

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Partitioner;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class Merge {

        // Map: parse each line as an integer and emit it as the key, so that
        // the shuffle phase sorts the numbers in ascending order.
        public static class TokenizerMapper extends Mapper<Object, Text, IntWritable, IntWritable> {
            private static final IntWritable ONE = new IntWritable(1);
            private final IntWritable data = new IntWritable();

            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString().trim();
                if (line.isEmpty()) {
                    return; // skip blank lines instead of failing in parseInt
                }
                data.set(Integer.parseInt(line));
                context.write(data, ONE);
            }
        }

        // Reduce: keys arrive in ascending order; emit (rank, number) once per
        // occurrence of the number. The input key type must be IntWritable
        // (not Object); otherwise this method only overloads Reducer.reduce
        // and is never called. The running rank is globally correct only
        // with a single reduce task.
        public static class Reduce extends Reducer<IntWritable, IntWritable, IntWritable, IntWritable> {
            private final IntWritable lineNum = new IntWritable(1);

            @Override
            public void reduce(IntWritable key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                for (IntWritable ignored : values) {
                    context.write(lineNum, key);
                    lineNum.set(lineNum.get() + 1);
                }
            }
        }

        // Custom partitioner: from the maximum input value and the number of
        // partitions, derive the boundaries that split the input into ranges
        // by size, then return the ID of the range the key falls into.
        public static class Partition extends Partitioner<IntWritable, IntWritable> {
            @Override
            public int getPartition(IntWritable key, IntWritable value, int num) {
                int maxNumber = 65223;
                int bound = maxNumber / num + 1;
                int partition = key.get() / bound;
                // Clamp so that every key, including negative or oversized
                // ones, maps to a valid partition ID in [0, num - 1].
                return Math.max(0, Math.min(partition, num - 1));
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");

            Job job = Job.getInstance(conf, "merge and sort");
            job.setJarByClass(Merge.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setReducerClass(Reduce.class);
            job.setPartitionerClass(Partition.class);
            job.setOutputKeyClass(IntWritable.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/input3/file1"));
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/input3/file2"));
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/input3/file3"));
            FileOutputFormat.setOutputPath(job, new Path("hdfs://localhost:9000/output3"));

            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

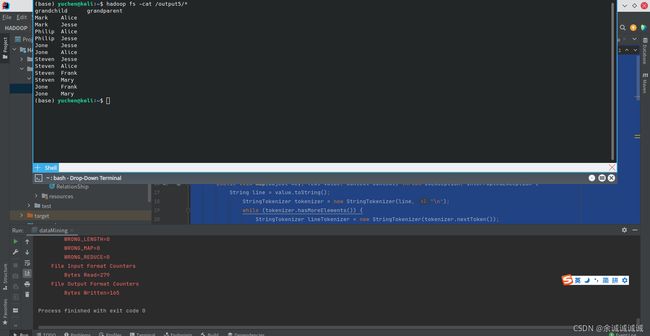
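A range partitioner for this task has to return an ID between 0 and the number of partitions minus 1 for every key, and larger keys must never land in a lower partition than smaller ones. The sketch below illustrates one way to satisfy both conditions in plain Java, using integer division followed by clamping. The upper bound 65223 is the value used in the report's partitioner; the names `RangePartitionSketch` and `partitionFor` are hypothetical, and the sketch does not depend on Hadoop.

```java
// Local sketch only: range partitioning by integer division, no Hadoop needed.
class RangePartitionSketch {

    // Assumed upper bound on input values, taken from the report's partitioner.
    static final int MAX_NUMBER = 65223;

    // Maps a key to a partition ID in [0, numPartitions - 1].
    // Keys are split into equal-width ranges of size "bound"; keys outside
    // [0, MAX_NUMBER] are clamped into the first or last partition.
    static int partitionFor(int key, int numPartitions) {
        int bound = MAX_NUMBER / numPartitions + 1;
        int partition = key / bound;
        return Math.max(0, Math.min(partition, numPartitions - 1));
    }

    public static void main(String[] args) {
        int numPartitions = 3;
        int[] keys = {1, 45, 21741, 21742, 43484, 65223};
        for (int key : keys) {
            System.out.println(key + " -> partition " + partitionFor(key, numPartitions));
        }
    }
}
```

Because the partition ID never decreases as the key grows, concatenating the sorted outputs of partitions 0, 1, 2, ... in order gives a globally sorted sequence. The rank column is a separate concern: a counter kept inside each reducer restarts in every partition, so it is only globally correct when a single reduce task is used.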

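(3) Mine information from a given table

Given a child-parent table, mine the parent-child relationships in it and produce a table of grandchild-grandparent relationships.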

The input file contains:

child parent

Steven Lucy

Steven Jack

Jone Lucy

Jone Jack

Lucy Mary

Lucy Frank

Jack Alice

Jack Jesse

David Alice

David Jesse

Philip David

Philip Alma

Mark David

Mark Alma

The output file contains:

grandchild grandparent

Steven Alice

Steven Jesse

Jone Alice

Jone Jesse

Steven Mary

Steven Frank

Jone Mary

Jone Frank

Philip Alice

Philip Jesse

Mark Alice

Mark Jesse

The MapReduce program for this task:

    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.List;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class dataMining {

        // Map: for each "child parent" line emit two records keyed by person:
        //   (parent, "-child")  means the value is a child of the key
        //   (child,  "+parent") means the value is a parent of the key
        public static class TokenizerMapper extends Mapper<Object, Text, Text, Text> {
            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String[] fields = value.toString().trim().split("\\s+");
                // Skip blank or malformed lines and the "child parent" header.
                if (fields.length < 2
                        || (fields[0].equals("child") && fields[1].equals("parent"))) {
                    return;
                }
                String son = fields[0];
                String parent = fields[1];
                context.write(new Text(parent), new Text("-" + son));
                context.write(new Text(son), new Text("+" + parent));
            }
        }

        // Reduce: for each person, every child of that person is a grandchild
        // of every parent of that person.
        public static class Reduce extends Reducer<Text, Text, Text, Text> {

            // Write the header once per reduce task, which is once overall
            // with the default single reduce task.
            @Override
            protected void setup(Context context) throws IOException, InterruptedException {
                context.write(new Text("grandchild"), new Text("grandparent"));
            }

            @Override
            public void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                List<Text> grandChild = new ArrayList<>();
                List<Text> grandParent = new ArrayList<>();
                for (Text val : values) {
                    String s = val.toString();
                    if (s.startsWith("-")) {
                        grandChild.add(new Text(s.substring(1)));
                    } else {
                        grandParent.add(new Text(s.substring(1)));
                    }
                }
                // Cross product of the key's children and the key's parents.
                for (Text text1 : grandChild) {
                    for (Text text2 : grandParent) {
                        context.write(text1, text2);
                    }
                }
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");

            Job job = Job.getInstance(conf, "data mining");
            job.setJarByClass(dataMining.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setReducerClass(Reduce.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);
            FileInputFormat.addInputPath(job, new Path("hdfs://localhost:9000/input5/file"));
            FileOutputFormat.setOutputPath(job, new Path("hdfs://localhost:9000/output5"));

            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
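The grandchild-grandparent relation is a self-join of the child-parent table on the person in the middle. The plain-Java sketch below illustrates that join locally, under the assumption that the whole table fits in memory; it is not the Hadoop job. The names `GrandparentSketch` and `grandPairs` are hypothetical, introduced for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Local sketch only: self-join of a child-parent table on the middle person.
class GrandparentSketch {

    // Each input row is {child, parent}. Returns "grandchild grandparent" lines.
    static List<String> grandPairs(String[][] childParent) {
        // Both maps are keyed by person, like the reduce key in a MapReduce job.
        Map<String, List<String>> childrenOf = new TreeMap<>();
        Map<String, List<String>> parentsOf = new TreeMap<>();
        for (String[] row : childParent) {
            String child = row[0];
            String parent = row[1];
            childrenOf.computeIfAbsent(parent, k -> new ArrayList<>()).add(child);
            parentsOf.computeIfAbsent(child, k -> new ArrayList<>()).add(parent);
        }

        // For every person with both children and parents, pair each child
        // (the grandchild) with each parent (the grandparent).
        List<String> pairs = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : childrenOf.entrySet()) {
            List<String> grandparents = parentsOf.get(entry.getKey());
            if (grandparents == null) {
                continue; // this person has no recorded parents
            }
            for (String grandchild : entry.getValue()) {
                for (String grandparent : grandparents) {
                    pairs.add(grandchild + " " + grandparent);
                }
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        // Sample table from the task description, without the header row.
        String[][] table = {
            {"Steven", "Lucy"}, {"Steven", "Jack"}, {"Jone", "Lucy"}, {"Jone", "Jack"},
            {"Lucy", "Mary"}, {"Lucy", "Frank"}, {"Jack", "Alice"}, {"Jack", "Jesse"},
            {"David", "Alice"}, {"David", "Jesse"}, {"Philip", "David"}, {"Philip", "Alma"},
            {"Mark", "David"}, {"Mark", "Alma"}
        };
        for (String pair : grandPairs(table)) {
            System.out.println(pair);
        }
    }
}
```

On the sample table only David, Jack, and Lucy have both children and parents, each contributing 2 x 2 pairs, which gives the 12 pairs of the expected output. The order of the lines may differ from the sample.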

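IV. Summary and Problems

1. What did you learn to use, and to do what?

Learned the basics of MapReduce programming.

2. What problems did you run into during the lab, and how did you solve them?

None so far.

Notes:

1. Upload your lab results to the "Hadoop实验报告" (Hadoop lab reports) folder;

2. Send the Word document directly; do not use a compressed archive;

3. File name format: student ID-name-lab number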