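Binary Deployment of a Highly Available k8s Cluster

Deploying a Highly Available Kubernetes Cluster

Cluster Environment Preparation

Host Planning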

| Host IP | Hostname | Spec | Role | Software |
|---|---|---|---|---|
| 192.168.220.20 | master01 | 2C2G | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, Containerd, runc |
| 192.168.220.21 | master02 | 2C2G | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, Containerd, runc |
| 192.168.220.22 | node01 | 2C2G | worker | kubelet, kube-proxy, Containerd, runc |
| 192.168.220.20 | master01 | 2C2G | LB | haproxy, keepalived |
| 192.168.220.21 | master02 | 2C2G | LB | haproxy, keepalived |
| 192.168.220.100 | / | / | VIP | / |

Software Versions

| Software | Version | Notes |
|---|---|---|
| CentOS 7 | kernel 5.17 | |
| kubernetes | v1.21.10 | |
| etcd | v3.5.2 | latest release at the time of writing |
| calico | v3.19.4 | |
| coredns | v1.8.4 | |
| containerd | 1.6.1 | |
| runc | 1.1.0 | |
| haproxy | 1.5.18 | default in the YUM repository |
| keepalived | 1.3.5 | default in the YUM repository |

Network Allocation

| Network | CIDR | Notes |
|---|---|---|
| Node network | 192.168.220.0/24 | |
| Service network | 10.96.0.0/16 | |
| Pod network | 10.244.0.0/16 | |

Cluster Deployment

Host Preparation

Setting Hostnames

# Set each hostname according to the host plan, for example:

[root@base_01 ~]# hostnamectl set-hostname master01

Configuring the hosts File

cat >> /etc/hosts << EOF

192.168.220.20 master01

192.168.220.21 master02

192.168.220.22 node01

EOF

Disabling the Firewall

[root@base_01 ~]# systemctl stop firewalld

[root@base_01 ~]# systemctl disable firewalld

[root@base_01 ~]# firewall-cmd --state

Disabling SELinux

# Disable SELinux temporarily

[root@base_01 ~]# setenforce 0

# Set SELinux to disabled in /etc/selinux/config; takes effect after a reboot

[root@base_01 ~]# sed -ri 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

# Check the SELinux status

[root@base_01 ~]# sestatus

Disabling the swap Partition

# Turn swap off temporarily

[root@base_01 ~]# swapoff -a

# Comment out the swap entries in /etc/fstab so swap stays off after a reboot

[root@base_01 ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab

# Add the kernel parameter vm.swappiness=0 so the kernel avoids swapping, and apply it without a reboot

[root@base_01 ~]# echo "vm.swappiness=0" >> /etc/sysctl.conf

[root@base_01 ~]# sysctl -p
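The `sed` command above comments out every line that contains `swap`, including lines that are already commented. A minimal sketch of a narrower, re-runnable variant follows. The function name `comment_out_swap` and the choice to match `swap` only as a whitespace-delimited field are assumptions made for illustration, not part of the original procedure. The file path is an argument so the function can be tried on a copy before it touches /etc/fstab.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# $1 is the file to edit. Lines that already start with '#' are skipped,
# so running the function twice does not stack extra '#' characters.
# sed -i.bak keeps a backup copy next to the file as <file>.bak.
comment_out_swap() {
  local fstab="$1"
  sed -E -i.bak '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab"
}
```

Usage: `cp /etc/fstab /tmp/fstab.test && comment_out_swap /tmp/fstab.test`, then inspect the result before applying it to the real file.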

Host Time Synchronization

# Install the ntpdate package

[root@base_01 ~]# yum -y install ntpdate

# Schedule an hourly time-sync cron job

[root@base_01 ~]# crontab -e

0 */1 * * * ntpdate time1.aliyun.com

Resource Limit Tuning

# Temporary limit

[root@base_01 ~]# ulimit -SHn 65535

# Persist to file

[root@base_01 ~]# cat >> /etc/security/limits.conf << EOF

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF
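A soft limit has to sit at or below its matching hard limit, so a soft value larger than the hard value is worth flagging before relying on the file. The sketch below is one way to check that. The function name `check_limits` is hypothetical, and the parser only understands the simple four-column `domain type item value` lines used in this section.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report limits.conf entries whose soft value exceeds the hard value.
# $1 is a limits.conf-style file. Exit status is 1 if any mismatch is found, 0 otherwise.
check_limits() {
  awk '
    $1 ~ /^#/ || NF < 4 { next }                 # skip comments and short lines
    $2 == "soft" { soft[$1 " " $3] = $4 }
    $2 == "hard" { hard[$1 " " $3] = $4 }
    END {
      for (k in soft)
        if ((k in hard) && soft[k] != "unlimited" && hard[k] != "unlimited" && soft[k] + 0 > hard[k] + 0) {
          print k ": soft " soft[k] " > hard " hard[k]
          bad = 1
        }
      exit bad
    }' "$1"
}
```

Usage: `check_limits /etc/security/limits.conf` prints one line per mismatched pair and returns a non-zero status if it finds any.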

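Installing the ipvs Tools and Loading Modules

Install on the cluster nodes; load-balancer-only nodes do not need this.

[root@base_01 ~]# yum -y install ipvsadm ipset sysstat conntrack libseccomp

# Load the modules temporarily on all nodes. Since kernel 4.19, nf_conntrack_ipv4 has been renamed to nf_conntrack; on 4.18 and earlier, use nf_conntrack_ipv4: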

[root@base_01 ~]# modprobe -- ip_vs

[root@base_01 ~]# modprobe -- ip_vs_rr

[root@base_01 ~]# modprobe -- ip_vs_wrr

[root@base_01 ~]# modprobe -- ip_vs_sh

[root@base_01 ~]# modprobe -- nf_conntrack

# Create /etc/modules-load.d/ipvs.conf with the following content

[root@base_01 ~]# cat > /etc/modules-load.d/ipvs.conf << EOF

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

Loading the Kernel Modules containerd Needs

# Load the modules temporarily

[root@base_01 ~]# modprobe overlay

[root@base_01 ~]# modprobe br_netfilter

# Load the modules persistently

[root@base_01 ~]# cat > /etc/modules-load.d/containerd.conf << EOF

overlay

br_netfilter

EOF

# Enable module loading at boot

[root@base_01 ~]# systemctl enable --now systemd-modules-load.service

Linux Kernel Upgrade

Run on all nodes; this replaces the operating system kernel.

# Install perl

[root@base_01 ~]# yum -y install perl

# Import the GPG key

[root@base_01 ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

# Install the ELRepo yum repository

[root@base_01 ~]# yum -y install https://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

# Install the kernel

[root@base_01 ~]# yum --enablerepo="elrepo-kernel" -y install kernel-ml.x86_64

# Update the boot menu

[root@base_01 ~]# grub2-set-default 0

[root@base_01 ~]# grub2-mkconfig -o /boot/grub2/grub.cfg

Kernel Parameter Changes

# Tune kernel parameters

[root@base_01 ~]# cat > /etc/sysctl.d/k8s.conf << EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 131072

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

# Apply the settings

[root@base_01 ~]# sysctl --system
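When a key appears more than once in a sysctl file, the assignments are applied in order and the last one wins, which can hide a stale or mistyped value. A small sketch for spotting repeated keys follows; the function name `duplicate_sysctl_keys` is hypothetical, and the parser assumes plain `key = value` lines.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list keys that are assigned more than once in a sysctl-style file.
# $1 is the file to scan. Each duplicated key is printed once.
duplicate_sysctl_keys() {
  awk -F= '
    /^[ \t]*(#|;|$)/ { next }            # skip comments and blank lines
    {
      key = $1
      gsub(/[ \t]/, "", key)             # strip whitespace around the key
      if (++seen[key] == 2) print key    # report on the second occurrence only
    }
  ' "$1"
}
```

Usage: `duplicate_sysctl_keys /etc/sysctl.d/k8s.conf`.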

# After the kernel is configured on all nodes, reboot to confirm the new kernel and modules still load

[root@base_01 ~]# reboot -h now

# After the reboot, check that the ipvs modules are loaded:

[root@master01 ~]# lsmod | grep --color=auto -e ip_vs -e nf_conntrack

# After the reboot, check that the modules containerd needs are loaded:

[root@master01 ~]# lsmod | grep -E 'br_netfilter|overlay'
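Reading `lsmod` output by eye gets tedious across several nodes. As a sketch, the check can be turned into a function that prints only the modules that are missing. The name `missing_modules` is hypothetical; it reads `lsmod`-style text on standard input and takes the required module names as arguments.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each required module that is absent from lsmod output.
# stdin: output of lsmod; arguments: module names to look for.
missing_modules() {
  local loaded m
  loaded="$(awk '{ print $1 }')"          # first column holds the module name
  for m in "$@"; do
    grep -qx -- "$m" <<< "$loaded" || echo "$m"
  done
}
```

Usage: `lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack overlay br_netfilter`. Empty output means every listed module is loaded.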

Load Balancer Preparation

Installing haproxy and keepalived

[root@master01 ~]# yum -y install haproxy keepalived

HAProxy Configuration

cat >/etc/haproxy/haproxy.cfg<<"EOF"

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

frontend k8s-master

bind 0.0.0.0:6535

bind 127.0.0.1:6535

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server master01 192.168.220.20:6443 check

server master02 192.168.220.21:6443 check

EOF

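Keepalived Configuration

The master and backup configurations differ (state, mcast_src_ip, and priority), so take care to apply the right one on each host.

# Master: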

cat >/etc/keepalived/keepalived.conf<<"EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state MASTER

interface ens32

mcast_src_ip 192.168.220.20

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.220.100

}

track_script {

chk_apiserver

}

}

EOF

# Backup:

cat >/etc/keepalived/keepalived.conf<<"EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface ens32

mcast_src_ip 192.168.220.21

virtual_router_id 51

priority 99

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.220.100

}

track_script {

chk_apiserver

}

}

EOF

Health Check Script

cat > /etc/keepalived/check_apiserver.sh <<"EOF"

#!/bin/bash

err=0

for k in $(seq 1 3)

do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

chmod +x /etc/keepalived/check_apiserver.sh
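The script above probes for a haproxy process up to three times, one second apart, and stops keepalived only if every probe fails, which lets the VIP move to the other node. The retry pattern on its own can be sketched as a reusable function. The name `retry_check` and its argument order are assumptions for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command up to N times and succeed on the first success.
# $1 = number of attempts, $2 = seconds to sleep between attempts, rest = command to run.
retry_check() {
  local attempts="$1" delay="$2"
  shift 2
  local i
  for ((i = 1; i <= attempts; i++)); do
    "$@" && return 0                       # stop as soon as the probe succeeds
    if (( i < attempts )); then
      sleep "$delay"                       # wait before the next attempt
    fi
  done
  return 1                                 # every attempt failed
}
```

Usage: `retry_check 3 1 pgrep haproxy > /dev/null || systemctl stop keepalived` has the same effect as the loop in the script.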

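Starting the Services and Verifying

# Start the services

[root@master01 ~]# systemctl enable --now haproxy keepalived

# Verify that the VIP 192.168.220.100 is bound on the master node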

[root@master01 ~]# ip a s

Configuring Passwordless SSH Login

Run on master01

# Generate the key pair

[root@master01 ~]# ssh-keygen

# Copy the public key to each node

[root@master01 ~]# ssh-copy-id master01

[root@master01 ~]# ssh-copy-id master02

[root@master01 ~]# ssh-copy-id node01

Deploying the etcd Cluster

The following preparation only needs to be done on master01.

Creating the Working Directory

[root@master01 ~]# mkdir /opt/packages

# Download the cfssl tools

[root@master01 ~]# cd /opt/packages/

[root@master01 packages]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@master01 packages]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@master01 packages]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

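# cfssl is a PKI/TLS toolkit written in Go and open-sourced by CloudFlare. Its main programs are:

# - cfssl, the CFSSL command-line tool

# - cfssljson, which takes the JSON output of cfssl and writes certificates, keys, CSRs, and bundles to files

# Make the binaries executable

[root@master01 packages]# chmod +x cf*

# Copy the cfssl tools to /usr/local/bin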

[root@master01 packages]# cp ./cfssl_linux-amd64 /usr/local/bin/cfssl

[root@master01 packages]# cp ./cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@master01 packages]# cp ./cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

# Check the cfssl version

[root@master01 packages]# cfssl version

Creating the CA Certificate

# Write the CA certificate signing request file

[root@master01 cert]# cat > ca-csr.json <<"EOF"

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

],

"ca": {

"expiry": "87600h"

}

}

EOF

# Create the CA certificate

[root@master01 cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

[root@master01 cert]# ls

ca.csr ca-csr.json ca-key.pem ca.pem

# Configure the CA signing policy

# (cfssl print-defaults config > ca-config.json generates a default policy)

[root@master01 cert]# cat > ca-config.json <<"EOF"

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

# server auth: a client can use this CA to verify the certificate presented by a server

# client auth: a server can use this CA to verify the certificate presented by a client

Creating the etcd Certificate

# Write the etcd certificate request file

[root@master01 cert]# cat > etcd-csr.json <<"EOF"

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.220.20",

"192.168.220.21"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}]

}

EOF

# Generate the etcd certificate

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

Configure on all master nodes

Downloading etcd

The etcd package is available from the project's GitHub releases

# Download the etcd package

[root@master01 packages]# wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz

# Extract the etcd package and copy the etcd executables to /usr/local/bin

[root@master01 softwares]# tar xf etcd-v3.5.2-linux-amd64.tar.gz

[root@master01 softwares]# cp ./etcd-v3.5.2-linux-amd64/etcd* /usr/local/bin/

# Check the current etcd version

[root@master01 softwares]# etcdctl version

etcdctl version: 3.5.2

API version: 3.5

# Distribute the etcd executables to the other master server

[root@master01 softwares]# scp ./etcd-v3.5.2-linux-amd64/etcd* root@master02:/usr/local/bin/

Configuring etcd

# Create the etcd configuration directory and data directory

[root@master01 ~]# mkdir /etc/etcd

[root@master01 ~]# mkdir -p /opt/data/etcd/default.etcd

# master01 configuration file

[root@master01 ~]# cat > /etc/etcd/etcd.conf <<"EOF"

# Member settings

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/opt/data/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.220.20:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.220.20:2379,http://127.0.0.1:2379"

# Cluster settings

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.220.20:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.220.20:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.220.20:2380,etcd2=https://192.168.220.21:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# master02 configuration file

[root@master02 ~]# cat > /etc/etcd/etcd.conf <<"EOF"

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/opt/data/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.220.21:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.220.21:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.220.21:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.220.21:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.220.20:2380,etcd2=https://192.168.220.21:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

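# Configuration file reference

# ETCD_NAME: member name, unique within the cluster

# ETCD_DATA_DIR: data directory

# ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

# ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

# ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

# ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

# ETCD_INITIAL_CLUSTER: addresses of all cluster members

# ETCD_INITIAL_CLUSTER_TOKEN: cluster token

# ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster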
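The `ETCD_INITIAL_CLUSTER` value must be identical on every member, so it helps to generate it from one list rather than type it per host. The sketch below does that and also prints the quorum arithmetic. etcd needs a majority of members, `n/2 + 1` with integer division, to accept writes, so a two-member cluster like the one here tolerates no member failure and a three-member cluster tolerates one. The function names are hypothetical, and port 2380 is taken from the configuration above.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for planning an etcd cluster.

# Build the ETCD_INITIAL_CLUSTER string from name=ip pairs (peer port 2380 assumed).
initial_cluster() {
  local pair out=""
  for pair in "$@"; do
    out+="${out:+,}${pair%%=*}=https://${pair#*=}:2380"
  done
  echo "$out"
}

# Majority needed for a cluster of $1 members.
quorum() { echo $(( $1 / 2 + 1 )); }

# Number of members that can fail while the cluster stays writable.
fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }
```

Usage: `initial_cluster etcd1=192.168.220.20 etcd2=192.168.220.21` reproduces the value used in both configuration files.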

Creating the Service Unit File

# Create the etcd certificate directory

[root@master01 ~]# mkdir -p /etc/etcd/ssl

# Copy the certificates into that directory

[root@master01 cert]# cp ./ca*.pem /etc/etcd/ssl/

[root@master01 cert]# cp ./etcd*.pem /etc/etcd/ssl/

# Sync the certificates to the other master node (/etc/etcd must already exist on master02)

[root@master01 ~]# scp -r /etc/etcd/ssl master02:/etc/etcd/

# Write the systemd unit file

[root@master01 ~]# cat > /usr/lib/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

# Location of the etcd configuration file

EnvironmentFile=-/etc/etcd/etcd.conf

# Working directory

WorkingDirectory=/opt/data/etcd/

# --cert-file / --key-file: etcd certificate and its key

# --trusted-ca-file: trusted CA certificate

# --peer-cert-file / --peer-key-file / --peer-trusted-ca-file: the same three files for peer (member) traffic

# --peer-client-cert-auth / --client-cert-auth: require client certificates from peers and from clients

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF
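systemd unit files do not support trailing comments: a `#` after a value is treated as part of the value, and a backslash continues a line only when it is the last character on that line. A quick sketch for catching such lines before starting the service follows. The function name `find_bad_continuations` is hypothetical, and the check is a heuristic that can also flag a legitimately escaped space.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print lines where a backslash is followed by whitespace
# and more text, i.e. the backslash is not the last character on the line.
# $1 is the unit or environment file to scan. Output is "lineno:line".
# Returns non-zero (grep's "no match" status) when nothing is found.
find_bad_continuations() {
  grep -nE '\\[[:space:]]+[^[:space:]]' "$1"
}
```

Usage: `find_bad_continuations /usr/lib/systemd/system/etcd.service` should print nothing for a clean unit file.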

Starting the etcd Cluster

# Start the etcd service

[root@master01 ~]# systemctl daemon-reload

[root@master01 ~]# systemctl enable --now etcd.service

# Check the service status

[root@master01 ssl]# systemctl status etcd

# Verify the cluster state

# Check endpoint health; ETCDCTL_API selects the API version and can be omitted

[root@master01 ssl]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://192.168.220.20:2379,https://192.168.220.21:2379 endpoint health

# List the etcd members

[root@master01 ~]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://192.168.220.20:2379,https://192.168.220.21:2379 member list

+------------------+---------+-------+-----------------------------+-----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+-------+-----------------------------+-----------------------------+------------+

| 32278b01af1acefd | started | etcd1 | https://192.168.220.20:2380 | https://192.168.220.20:2379 | false |

| 598347348868c61c | started | etcd2 | https://192.168.220.21:2380 | https://192.168.220.21:2379 | false |

+------------------+---------+-------+-----------------------------+-----------------------------+------------+

# Show the etcd endpoint status

[root@master01 ~]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://192.168.220.20:2379,https://192.168.220.21:2379 endpoint status

+-----------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+-----------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| https://192.168.220.20:2379 | 32278b01af1acefd | 3.5.2 | 20 kB | true | false | 2 | 11 | 11 | |

| https://192.168.220.21:2379 | 598347348868c61c | 3.5.2 | 20 kB | false | false | 2 | 11 | 11 | |

+-----------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

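Kubernetes Cluster Deployment

Downloading and Installing the Kubernetes Packages

# Download the package (it can also be downloaded from the official website)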

[root@master01 softwares]# wget https://dl.k8s.io/v1.21.10/kubernetes-server-linux-amd64.tar.gz

# Extract the package

[root@master01 softwares]# tar -xf kubernetes-server-linux-amd64.tar.gz

# Copy kube-apiserver, kube-controller-manager, kube-scheduler, and kubectl into place on the master node

[root@master01 bin]# cp kube-apiserver kube-controller-manager kubectl kube-scheduler /usr/local/bin/

# Copy kubelet and kube-proxy to the worker node; they can also be installed on the master nodes

[root@master01 bin]# scp kubelet kube-proxy node01:/usr/local/bin/

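# Create the directories on all cluster nodes

[root@master01 bin]# mkdir -p /etc/kubernetes/

[root@master01 bin]# mkdir -p /etc/kubernetes/ssl

[root@master01 bin]# mkdir -p /opt/log/kubernetes

# The kube-apiserver configuration below writes its logs to /opt/log/api-server, so create that directory as well

[root@master01 bin]# mkdir -p /opt/log/api-server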

Deploying the API Server

# Write the apiserver certificate request file

[root@master01 cert]# cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.220.20",

"192.168.220.21",

"192.168.220.22",

"192.168.220.100",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

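# Notes:

# If the hosts field is not empty, it must list every IP (including the VIP) and domain name authorized to use this certificate. Because the certificate is used across the cluster, include the IPs of all nodes; listing a few spare IPs makes later expansion easier.

# Also include the first IP of the Service network (normally the first IP of the range passed to kube-apiserver as service-cluster-ip-range, e.g. 10.96.0.1).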
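The first Service IP can be derived from the CIDR rather than read off by eye: mask the address down to its network address and add one. A minimal sketch in bash arithmetic follows. The function name `first_service_ip` is hypothetical, and it assumes a well-formed IPv4 CIDR with no leading zeros in the octets.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first usable IP of an IPv4 CIDR (network address + 1).
first_service_ip() {
  local cidr="$1" ip prefix a b c d n mask
  ip="${cidr%/*}"
  prefix="${cidr#*/}"
  IFS=. read -r a b c d <<< "$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))              # address as a 32-bit integer
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))     # network mask for the prefix
  n=$(( (n & mask) + 1 ))                                    # network address + 1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

Usage: `first_service_ip 10.96.0.0/16` gives the address to place in the certificate's hosts list for this cluster's Service network.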

# Generate the apiserver certificate and the token file

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

# Create token.csv, used later to issue certificates to worker nodes dynamically

[root@master01 cert]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

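# Notes:

# This creates the token required by TLS bootstrapping.

# TLS bootstrapping: once the apiserver enables TLS authentication, kubelet and kube-proxy on every node must use valid CA-signed certificates to talk to kube-apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster harder. To simplify this, Kubernetes introduced TLS bootstrapping to issue client certificates automatically: kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet certificate is signed dynamically through the apiserver. This approach is strongly recommended on nodes. It currently applies mainly to kubelet; kube-proxy still uses a certificate that we issue centrally.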
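Each line of a static token file has the form `token,user,uid,"group"`. The sketch below generates one such line and is convenient for checking the format before the file is handed to kube-apiserver. The function name `make_bootstrap_token_line` is hypothetical. It uses `od -t x1` (byte-wise hex) instead of the `od -t x` call above; both produce 32 hex characters from 16 random bytes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one token.csv line for the kubelet-bootstrap user.
# The token is 16 random bytes rendered as 32 lowercase hex characters.
make_bootstrap_token_line() {
  local token
  token="$(head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n')"
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token"
}
```

Usage: `make_bootstrap_token_line > token.csv`.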

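Creating the apiserver Configuration File

# master01 configuration file

# Flag notes (comments cannot go inside the quoted value, so they are listed here):

#   --bind-address / --secure-port: listen address and HTTPS port

#   --advertise-address: address advertised to the rest of the cluster

#   --insecure-port=0: disables the insecure port; change 0 to 8080 to enable it

#   --authorization-mode: authorization modes

#   --service-cluster-ip-range: Service ClusterIP range

#   --service-node-port-range: NodePort range for Services

[root@master01 ~]# cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=192.168.220.20 \

--secure-port=6443 \

--advertise-address=192.168.220.20 \

--insecure-port=0 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-50000 \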

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://192.168.220.20:2379,https://192.168.220.21:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=2 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/log/api-server/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/api-server \

--v=4"

EOF

# master02 configuration file

[root@master02 ~]# cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=192.168.220.21 \

--secure-port=6443 \

--advertise-address=192.168.220.21 \

--insecure-port=0 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://192.168.220.20:2379,https://192.168.220.21:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--apiserver-count=2 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/log/api-server/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/api-server \

--v=4"

EOF

Creating the apiserver systemd Unit File

[root@master01 ~]# cat > /usr/lib/systemd/system/kube-apiserver.service << "EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

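# Copy the files into place locally, then sync them to the other master node

[root@master01 cert]# cp ca*.pem /etc/kubernetes/ssl/

[root@master01 cert]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@master01 cert]# cp token.csv /etc/kubernetes/

[root@master01 cert]# scp token.csv master02:/etc/kubernetes/

[root@master01 cert]# scp ca*.pem kube-apiserver*.pem master02:/etc/kubernetes/ssl/

[root@master01 cert]# scp /usr/lib/systemd/system/kube-apiserver.service master02:/usr/lib/systemd/system/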

Starting the apiserver Service

[root@master01 cert]# systemctl daemon-reload

[root@master01 cert]# systemctl enable --now kube-apiserver

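# Test through the load balancer port; a 401 Unauthorized response shows the apiserver is reachable and rejecting anonymous requests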

[root@master01 cert]# curl --insecure https://192.168.220.20:6535

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

[root@master01 cert]# curl --insecure https://192.168.220.21:6535

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

[root@master01 cert]# curl --insecure https://192.168.220.100:6535

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {

},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

Deploying kubectl

Creating the kubectl Certificate Request File

[root@master01 cert]# cat > admin-csr.json << "EOF"

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

EOF

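# Notes:

# kube-apiserver later uses RBAC to authorize requests from clients (such as kubelet, kube-proxy, and Pods);

# kube-apiserver predefines some RBAC bindings; for example, the cluster-admin ClusterRoleBinding binds the group system:masters to the cluster-admin ClusterRole, which grants access to every kube-apiserver API;

# O sets the certificate's group to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the group system:masters is pre-authorized, the client is granted access to all APIs;

# Note:

# This admin certificate is used later to generate the administrator's kubeconfig. RBAC is the generally recommended way to control roles and permissions in Kubernetes; Kubernetes takes the certificate's CN field as the User and the O field as the Group;

# "O": "system:masters" must be exactly system:masters, otherwise the later kubectl create clusterrolebinding fails.

# Generate the certificate files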

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

# Copy the files to the certificate directory

[root@master01 cert]# cp admin*pem /etc/kubernetes/ssl/

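Generating the kubeconfig File

kube.config is the kubectl configuration file. It contains everything needed to reach the apiserver: the apiserver address, the CA certificate, and the client's own certificate.

# Set the cluster, its CA certificate, and the apiserver URL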

[root@master01 ssl]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.220.100:6535 --kubeconfig=/root/.kube/kube.config

# Set the admin user's client certificate

[root@master01 ssl]# kubectl config set-credentials admin --client-certificate=/etc/kubernetes/ssl/admin.pem --client-key=/etc/kubernetes/ssl/admin-key.pem --embed-certs=true --kubeconfig=/root/.kube/kube.config

# Set the context

[root@master01 ssl]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=/root/.kube/kube.config

# Switch to the context

[root@master01 ssl]# kubectl config use-context kubernetes --kubeconfig=/root/.kube/kube.config
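The same four `kubectl config` calls recur for every component that needs a kubeconfig, so they can be wrapped in one function. The sketch below is an illustration under stated assumptions: the function name `write_kubeconfig` and the `KUBE_APISERVER`, `KUBE_CA`, and `DRY_RUN` variables are hypothetical, the defaults are taken from the commands above, and the context is named after the user (the admin example above names its context `kubernetes` instead). With `DRY_RUN=1` the commands are printed rather than executed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a kubeconfig with the four kubectl config calls.
# $1 = user name, $2 = client certificate, $3 = client key, $4 = output kubeconfig path.
# Set DRY_RUN=1 to print the commands instead of running them.
write_kubeconfig() {
  local user="$1" cert="$2" key="$3" out="$4"
  local server="${KUBE_APISERVER:-https://192.168.220.100:6535}"
  local ca="${KUBE_CA:-/etc/kubernetes/ssl/ca.pem}"
  local run=()
  if [ -n "${DRY_RUN:-}" ]; then run=(echo); fi

  "${run[@]}" kubectl config set-cluster kubernetes \
    --certificate-authority="$ca" --embed-certs=true \
    --server="$server" --kubeconfig="$out" &&
  "${run[@]}" kubectl config set-credentials "$user" \
    --client-certificate="$cert" --client-key="$key" \
    --embed-certs=true --kubeconfig="$out" &&
  "${run[@]}" kubectl config set-context "$user" \
    --cluster=kubernetes --user="$user" --kubeconfig="$out" &&
  "${run[@]}" kubectl config use-context "$user" --kubeconfig="$out"
}
```

Usage: `DRY_RUN=1 write_kubeconfig admin /etc/kubernetes/ssl/admin.pem /etc/kubernetes/ssl/admin-key.pem /root/.kube/kube.config` prints the commands for review; drop `DRY_RUN=1` to run them.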

Creating the Role Binding

[root@master01 ssl]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/kube.config

Checking the Cluster Status

# Point KUBECONFIG at the file; ideally add this line to .bashrc, .bash_profile, or /etc/profile

[root@master01 ~]# export KUBECONFIG=$HOME/.kube/kube.config

# Show the cluster information

[root@master01 ~]# kubectl cluster-info

Kubernetes control plane is running at https://192.168.220.100:6535

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

# Show the status of the cluster components

[root@master01 ~]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

# controller-manager and scheduler are Unhealthy because they have not been installed and configured yet

# List the resources in all namespaces

[root@master01 ~]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.96.0.1 443/TCP 25h

Syncing the kubectl Configuration to the Other Master Node

# Sync the certificate files

[root@master01 ~]# scp /etc/kubernetes/ssl/admin* master02:/etc/kubernetes/ssl/

# Sync the configuration file

[root@master01 ~]# scp -r /root/.kube/ master02:/root/

# Verify on the other node

[root@master02 ~]# export KUBECONFIG=$HOME/.kube/kube.config

[root@master02 ~]# kubectl cluster-info

Kubernetes control plane is running at https://192.168.220.100:6535

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@master02 ~]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Unhealthy Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

[root@master02 ~]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.96.0.1 443/TCP 25h

Configuring kubectl Command Completion

[root@master02 ~]# yum install -y bash-completion

[root@master02 ~]# source /usr/share/bash-completion/bash_completion

[root@master02 ~]# source <(kubectl completion bash)

[root@master02 ~]# kubectl completion bash > ~/.kube/completion.bash.inc

[root@master02 ~]# source '/root/.kube/completion.bash.inc'

[root@master02 ~]# source $HOME/.bash_profile

Deploying kube-controller-manager

Creating the kube-controller-manager Certificate Request File and Cluster Configuration

[root@master01 cert]# cat > kube-controller-manager-csr.json << "EOF"

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.220.20",

"192.168.220.21",

"192.168.220.22"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

EOF

# Notes:

# The hosts list contains the IPs of all kube-controller-manager nodes;

# CN is system:kube-controller-manager;

# O is system:kube-controller-manager; the built-in ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs

# Create the kube-controller-manager certificate files

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

# Copy the kube-controller-manager certificates to the certificate directory

[root@master01 cert]# cp kube-controller-manager-key.pem kube-controller-manager.pem /etc/kubernetes/ssl/

# Create kube-controller-manager.kubeconfig for kube-controller-manager and configure the cluster

# Set the cluster, its CA certificate, and the apiserver URL

[root@master01 cert]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.220.100:6535 --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig

# Set the client credentials

[root@master01 cert]# kubectl config set-credentials system:kube-controller-manager --client-certificate=/etc/kubernetes/ssl/kube-controller-manager.pem --client-key=/etc/kubernetes/ssl/kube-controller-manager-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig

# Set the context

[root@master01 cert]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig

# Switch to the context

[root@master01 cert]# kubectl config use-context system:kube-controller-manager --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig

Creating the kube-controller-manager Configuration File

# Create the kube-controller-manager log directory

[root@master01 ~]# mkdir -p /opt/log/control-manager

# Create the kube-controller-manager configuration file

# Flag notes (comments cannot go inside the quoted value, so they are listed here):

#   --port / --secure-port: insecure and secure listening ports

#   --bind-address: bind to the local loopback address

#   --kubeconfig: the kube-controller-manager.kubeconfig file created above

#   --service-cluster-ip-range: Service IP range

#   --cluster-name: cluster name

#   --cluster-signing-cert-file / --cluster-signing-key-file: cluster CA certificate and its key

#   --cluster-cidr: Pod IP range

[root@master01 cert]# cat > kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS="--port=10252 \

--secure-port=10257 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.96.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--experimental-cluster-signing-duration=87600h \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-use-rest-clients=true \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/control-manager \

--v=2"

EOF

Creating the systemd Unit File

# Copy the configuration file into place

[root@master01 cert]# cp kube-controller-manager.conf /etc/kubernetes/

# Write the systemd unit file

[root@master01 cert]# cat > /usr/lib/systemd/system/kube-controller-manager.service << "EOF"

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Syncing Files to the Other Master Node

# Copy the certificates, the conf and kubeconfig files, and the unit file to the other master node

[root@master01 kubernetes]# scp kube-controller-manager.* master02:/etc/kubernetes/

[root@master01 ssl]# scp kube-controller-manager* master02:/etc/kubernetes/ssl/

[root@master01 system]# scp kube-controller-manager.service master02:/usr/lib/systemd/system

Starting the Service

# Reload the systemd configuration

[root@master01 system]# systemctl daemon-reload

# Enable kube-controller-manager at boot and start it now

[root@master01 system]# systemctl enable --now kube-controller-manager

# 查看服务状态

[root@master01 system]# systemctl status kube-controller-manager

# 验证

[root@master01 system]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Healthy ok

etcd-1 Healthy {"health":"true","reason":""}

etcd-0 Healthy {"health":"true","reason":""}

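# controller-manager now reports Healthy; repeat the same steps on the other master node

Deploy kube-scheduler

Generate the kube-scheduler certificate signing request

# Certificate signing request file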

[root@master01 cert]# cat > kube-scheduler-csr.json << "EOF"

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.220.20",

"192.168.220.21"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

EOF

# Generate the kube-scheduler certificate

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

# Copy the generated kube-scheduler certificate files to /etc/kubernetes/ssl

[root@master01 cert]# cp kube-scheduler.pem kube-scheduler-key.pem /etc/kubernetes/ssl/

Create the kube-scheduler kubeconfig

# Set the cluster, its CA certificate, and the API server address

[root@master01 ssl]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.220.100:6535 --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig

# Set the client certificate credentials

[root@master01 kubernetes]# kubectl config set-credentials system:kube-scheduler --client-certificate=/etc/kubernetes/ssl/kube-scheduler.pem --client-key=/etc/kubernetes/ssl/kube-scheduler-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig

# Set the context used to access the cluster

[root@master01 kubernetes]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig

# Switch to the context just created

[root@master01 kubernetes]# kubectl config use-context system:kube-scheduler --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig

Create the service configuration file and the unit file

# Create the kube-scheduler log directory

[root@master01 ~]# mkdir -p /opt/logs/scheduler

# Create the service configuration file

[root@master01 kubernetes]# cat > /etc/kubernetes/kube-scheduler.conf << "EOF"

KUBE_SCHEDULER_OPTS="--address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/logs/scheduler \

--v=2"

EOF

# Create the systemd unit file

[root@master01 kubernetes]# cat > /usr/lib/systemd/system/kube-scheduler.service << "EOF"

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Sync the files to the other master node

# Sync the SSL certificates

[root@master01 ~]# scp /etc/kubernetes/ssl/kube-scheduler* master02:/etc/kubernetes/ssl/

# Sync the configuration files

[root@master01 ~]# scp /etc/kubernetes/kube-scheduler.* master02:/etc/kubernetes/

# Sync the systemd unit file

[root@master01 ~]# scp /usr/lib/systemd/system/kube-scheduler.service master02:/usr/lib/systemd/system/

Start the scheduler service and verify

# Reload the systemd configuration

[root@master01 ~]# systemctl daemon-reload

# Start the scheduler service

[root@master01 ~]# systemctl enable --now kube-scheduler

# Check the service status

[root@master01 ~]# systemctl status kube-scheduler

# Check the component status

[root@master01 ~]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

# All four components required on a master node are now healthy
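The health check above is read by eye. A minimal sketch of automating it follows; the function name `unhealthy_components` is hypothetical, and it assumes the column layout shown in the output above (name first, status second).

```shell
# Print the names of components whose STATUS column is not "Healthy".
# Reads `kubectl get componentstatuses` output on stdin.
unhealthy_components() {
  awk '$1 == "NAME" || $1 == "Warning:" { next }   # skip header and deprecation warning
       NF >= 2 && $2 != "Healthy" { print $1 }'
}

# Sample input copied from the earlier output in this guide.
unhealthy_components << 'EOF'
NAME STATUS MESSAGE ERROR
scheduler Unhealthy Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager Healthy ok
etcd-0 Healthy {"health":"true","reason":""}
EOF
```

On a live cluster the same function could be fed with `kubectl get componentstatuses 2>/dev/null | unhealthy_components`; empty output means every listed component is healthy.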

Containerd 安装及配置

获取软件包

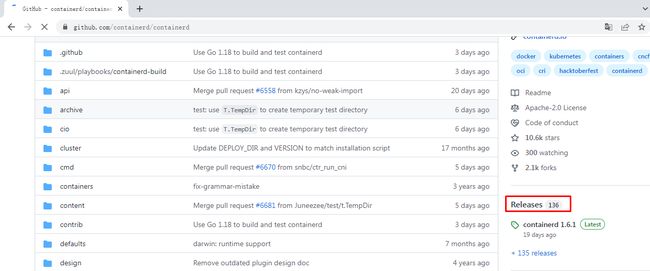

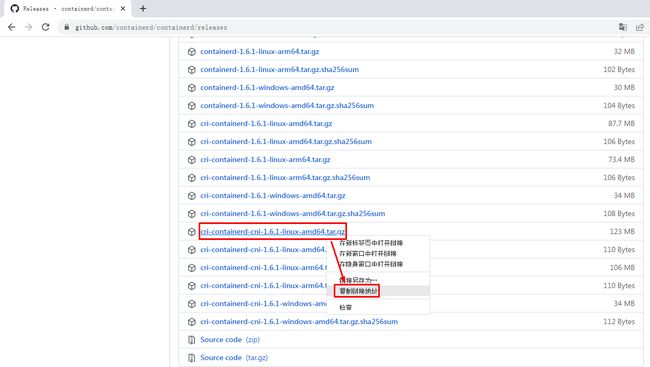

可以上到 githup 官网上进行下载

或直接使用 wget 下载到指定服务器

[root@worker1 ~]# wget https://github.com/containerd/containerd/releases/download/v1.6.1/cri-containerd-cni-1.6.1-linux-amd64.tar.gz

# Extract the containerd package into /

[root@worker1 ~]# tar -xf cri-containerd-cni-1.6.1-linux-amd64.tar.gz -C /

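# After extraction the following directories exist:

# etc

# opt

# usr

# Extracting straight into / avoids a separate copy step

Generate and edit the containerd configuration file

# Create the configuration directory

[root@worker1 ~]# mkdir -p /etc/containerd

# Generate the default configuration template

[root@worker1 ~]# containerd config default >/etc/containerd/config.toml

# Overwrite it with the edited configuration

[root@worker1 ~]# cat >/etc/containerd/config.toml << EOF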

root = "/var/lib/containerd" # working directory

state = "/run/containerd" # state directory

oom_score = -999

[grpc]

address = "/run/containerd/containerd.sock" # location of the socket file

uid = 0

gid = 0

max_recv_message_size = 16777216

max_send_message_size = 16777216

[debug]

address = ""

uid = 0

gid = 0

level = ""

[metrics]

address = ""

grpc_histogram = false

[cgroup]

path = ""

[plugins]

[plugins.cgroups]

no_prometheus = false

[plugins.cri]

stream_server_address = "127.0.0.1"

stream_server_port = "0"

enable_selinux = false

sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6" # sandbox (pause) image

stats_collect_period = 10

systemd_cgroup = true # use the systemd cgroup driver

enable_tls_streaming = false

max_container_log_line_size = 16384

[plugins.cri.containerd]

snapshotter = "overlayfs"

no_pivot = false

[plugins.cri.containerd.default_runtime]

runtime_type = "io.containerd.runtime.v1.linux"

runtime_engine = ""

runtime_root = ""

[plugins.cri.containerd.untrusted_workload_runtime]

runtime_type = ""

runtime_engine = ""

runtime_root = ""

[plugins.cri.cni]

bin_dir = "/opt/cni/bin"

conf_dir = "/etc/cni/net.d"

conf_template = "/etc/cni/net.d/10-default.conf"

[plugins.cri.registry]

[plugins.cri.registry.mirrors]

[plugins.cri.registry.mirrors."docker.io"]

endpoint = [

"https://docker.mirrors.ustc.edu.cn",

"http://hub-mirror.c.163.com"

]

[plugins.cri.registry.mirrors."gcr.io"]

endpoint = [

"https://gcr.mirrors.ustc.edu.cn"

]

[plugins.cri.registry.mirrors."k8s.gcr.io"]

endpoint = [

"https://gcr.mirrors.ustc.edu.cn/google-containers/"

]

[plugins.cri.registry.mirrors."quay.io"]

endpoint = [

"https://quay.mirrors.ustc.edu.cn"

]

[plugins.cri.registry.mirrors."harbor.kubemsb.com"]

endpoint = [

"http://harbor.kubemsb.com"

]

[plugins.cri.x509_key_pair_streaming]

tls_cert_file = ""

tls_key_file = ""

[plugins.diff-service]

default = ["walking"]

[plugins.linux]

shim = "containerd-shim"

runtime = "runc"

runtime_root = ""

no_shim = false

shim_debug = false

[plugins.opt]

path = "/opt/containerd"

[plugins.restart]

interval = "10s"

[plugins.scheduler]

pause_threshold = 0.02

deletion_threshold = 0

mutation_threshold = 100

schedule_delay = "0s"

startup_delay = "100ms"

EOF

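Install runc

The runc bundled in the package above depends on too many system libraries, so installing a standalone build is recommended.

Running the bundled runc fails with: runc: symbol lookup error: runc: undefined symbol: seccomp_notify_respond

Download from GitHub

# Download directly with wget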

[root@worker1 ~]# wget https://github.com/opencontainers/runc/releases/download/v1.1.0/runc.amd64

# Make the downloaded runc executable and replace the runc that came with the package

[root@worker1 ~]# chmod +x runc.amd64

[root@worker1 ~]# mv runc.amd64 /usr/local/sbin/runc

# Check the runc version

[root@worker1 ~]# runc -v

runc version 1.1.0

commit: v1.1.0-0-g067aaf85

spec: 1.0.2-dev

go: go1.17.6

libseccomp: 2.5.3

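Start the containerd service

# Start the service and enable it at boot

[root@worker1 ~]# systemctl enable --now containerd

# Check the service status

[root@worker1 ~]# systemctl status containerd

Note: choose either Docker or containerd as the container runtime; only one of the two is needed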

Docker installation and configuration

Download docker-compose from the official releases page: https://github.com/docker/compose/releases

Download the static Docker binaries from https://download.docker.com/linux/static/stable/x86_64, or install Docker with yum:

yum -y install docker-ce-19.03.*

Deploy and install Docker

# Unpack the Docker archive; newer releases are available from the official site

[root@localhost ~]# tar xf docker-18.06.3-ce.tgz

# Copy the binaries from the docker directory to /usr/local/bin

[root@localhost ~]# cp docker/* /usr/local/bin/

# Copy the docker-compose binary to /usr/local/bin

[root@localhost ~]# chmod +x docker-compose-linux-x86_64 && cp docker-compose-linux-x86_64 /usr/local/bin/docker-compose

# Write the docker service unit file

[root@localhost ~]# cat > /usr/lib/systemd/system/docker.service << "EOF"

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/local/bin/dockerd

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

Delegate=yes

KillMode=process

OOMScoreAdjust=-500

[Install]

WantedBy=multi-user.target

EOF

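# Write the Docker daemon.json file. JSON does not allow comments, so note here that insecure-registries is the private registry address and must match the Harbor configuration

[root@localhost ~]# mkdir -p /etc/docker

[root@localhost ~]# cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": [

"https://651cp807.mirror.aliyuncs.com",

"https://docker.mirrors.ustc.edu.cn",

"http://hub-mirror.c.163.com"

],

"insecure-registries": ["https://harbor.images.io:9181"],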

"exec-opts": ["native.cgroupdriver=systemd"],

"max-concurrent-downloads": 10,

"log-driver": "json-file",

"log-level": "warn",

"log-opts": {

"max-size": "10m",

"max-file": "3"

},

"data-root": "/opt/data/docker"

}

EOF

# Start the Docker service

[root@localhost ~]# systemctl daemon-reload && systemctl enable --now docker

# Check the Docker version

[root@localhost ~]# docker version

Client:

Version: 18.06.3-ce

API version: 1.38

Go version: go1.10.4

Git commit: d7080c1

Built: Wed Feb 20 02:24:22 2019

OS/Arch: linux/amd64

Experimental: false

Server:

Engine:

Version: 18.06.3-ce

API version: 1.38 (minimum version 1.12)

Go version: go1.10.3

Git commit: d7080c1

Built: Wed Feb 20 02:25:33 2019

OS/Arch: linux/amd64

Experimental: false

Deploy kubelet

Run the following on master01

Create kubelet-bootstrap.kubeconfig

# Read the token stored in token.csv

[root@master01 cert]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

# Set the cluster, its CA certificate, and the API server address

[root@master01 ssl]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.220.100:6535 --kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig

# Set the bootstrap token credentials

[root@master01 ~]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig

# Set the context for the cluster

[root@master01 ~]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig

# Switch to that context

[root@master01 ~]# kubectl config use-context default --kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig
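The token extraction at the start of this step prints the first field of every line in token.csv, so a file with more than one line would yield a multi-line value. A minimal sketch that reads only the first line is shown below; the function name `read_bootstrap_token` and the sample token are hypothetical, and the `token,user,uid,group` layout is an assumption based on how the field is read above.

```shell
# Print the first comma-separated field of the first line of a token file.
read_bootstrap_token() {
  awk -F ',' 'NR == 1 { print $1 }' "$1"
}

# Demo with a throwaway file; the token value below is made up.
sample=$(mktemp)
printf '%s\n' '0123456789abcdef0123456789abcdef,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > "$sample"
BOOTSTRAP_TOKEN=$(read_bootstrap_token "$sample")
echo "$BOOTSTRAP_TOKEN"
```

In the real flow the argument would be /etc/kubernetes/token.csv.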

# Create the cluster-system-anonymous ClusterRoleBinding

[root@master01 ~]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

# Create the kubelet-bootstrap ClusterRoleBinding

[root@master01 ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap --kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig

# Verify

[root@master01 kubernetes]# kubectl describe clusterrolebinding cluster-system-anonymous

Name: cluster-system-anonymous

Labels:

Annotations:

Role:

Kind: ClusterRole

Name: cluster-admin

Subjects:

Kind Name Namespace

---- ---- ---------

User kubelet-bootstrap

[root@master01 kubernetes]# kubectl describe clusterrolebinding kubelet-bootstrap

Name: kubelet-bootstrap

Labels:

Annotations:

Role:

Kind: ClusterRole

Name: system:node-bootstrapper

Subjects:

Kind Name Namespace

---- ---- ---------

User kubelet-bootstrap

Containerd option

Create the kubelet configuration file

[root@master01 ~]# cat > /etc/kubernetes/kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem" # CA certificate

},

"webhook": {

"enabled": true, # whether webhook authentication is enabled

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false # whether anonymous access is enabled

}

},

"authorization": {

"mode": "Webhook", # authorization mode

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "192.168.220.20", # IP of the current host

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

Create the kubelet systemd unit file

[root@master01 kubelet]# cat > /usr/lib/systemd/system/kubelet.service << "EOF"

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet.json \

--cni-bin-dir=/opt/cni/bin \

--cni-conf-dir=/etc/cni/net.d \

--container-runtime=remote \

--container-runtime-endpoint=unix:///run/containerd/containerd.sock \

--network-plugin=cni \

--rotate-certificates \

--pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.2 \

--root-dir=/etc/cni/net.d \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/log/kubernetes/kubelet \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

Sync the configuration files to the cluster nodes

# Copy the files to the other nodes

[root@master01 ~]# for i in master02 node01;do

scp /etc/kubernetes/kubelet* $i:/etc/kubernetes

scp /etc/kubernetes/ssl/ca.pem $i:/etc/kubernetes/ssl

scp /usr/lib/systemd/system/kubelet.service $i:/usr/lib/systemd/system/

done

# Note: on each node, change address in kubelet.json to that node's own IP address
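The per-node address change can be scripted instead of edited by hand. The following is a minimal sketch, assuming the `"address": "..."` line layout used in kubelet.json above; the function name `set_kubelet_address` is hypothetical.

```shell
# Rewrite the "address" value in a kubelet JSON config.
# $1 = path to the config file, $2 = IP address of the target node
set_kubelet_address() {
  sed -E "s/(\"address\":[[:space:]]*\")[^\"]*\"/\1$2\"/" "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}

# Demo on a throwaway file rather than the real /etc/kubernetes/kubelet.json.
cfg=$(mktemp)
printf '%s\n' '{' '  "address": "192.168.220.20",' '  "port": 10250' '}' > "$cfg"
set_kubelet_address "$cfg" 192.168.220.21
cat "$cfg"
```

On master02, for example, the call would be `set_kubelet_address /etc/kubernetes/kubelet.json 192.168.220.21` after the files have been copied over.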

# After the configuration is updated, create the log and working directories and start the service

[root@master01 ~]# mkdir -p /opt/log/kubernetes/kubelet /var/lib/kubelet

[root@master01 ~]# systemctl daemon-reload

[root@master01 ~]# systemctl enable --now kubelet

# Check the cluster nodes

[root@master01 kubernetes]# kubectl get node

NAME STATUS ROLES AGE VERSION

master01 Ready 29m v1.21.10

master02 Ready 29m v1.21.10

worker1 Ready 29m v1.21.10

Docker option

Create the kubelet configuration file

# Set the node IP and hostname to match the actual node

[root@master01 ~]# cat > /etc/kubernetes/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/logs/kubelet \

--hostname-override=master01 \

--network-plugin=cni \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--config=/etc/kubernetes/kubelet-config.yml \

--cert-dir=/etc/kubernetes/ssl \

--pod-infra-container-image=harbor.images.io:9181/library/pause/pause:3.2"

EOF

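# --hostname-override: display name of the node, unique within the cluster

# --network-plugin: enable CNI

# --kubeconfig: path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

# --bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

# --config: configuration parameter file

# --cert-dir: directory where kubelet certificates are generated

# --pod-infra-container-image: image of the container that manages the Pod network

Configuration parameter file

[root@master01 ~]# cat > /etc/kubernetes/kubelet-config.yml << EOF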

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.220.20

port: 10250

readOnlyPort: 10255

cgroupDriver: systemd

clusterDNS:

- 10.96.0.2

clusterDomain: cluster.local

failSwapOn: false

authentication:

anonymous:

enabled: false

webhook:

cacheTTL: 2m0s

enabled: true

x509:

clientCAFile: /etc/kubernetes/ssl/ca.pem

authorization:

mode: Webhook

webhook:

cacheAuthorizedTTL: 5m0s

cacheUnauthorizedTTL: 30s

evictionHard:

imagefs.available: 15%

memory.available: 100Mi

nodefs.available: 10%

nodefs.inodesFree: 5%

maxOpenFiles: 1000000

maxPods: 110

EOF

Create the systemd unit file

[root@master01 ~]# cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/kubelet.conf

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Start the service

[root@master01 ~]# systemctl daemon-reload && systemctl enable --now kubelet

[root@master01 ~]# systemctl status kubelet

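The $ in $KUBELET_OPTS is escaped because the heredoc delimiter is unquoted: without the backslash the shell would expand KUBELET_OPTS, which is empty at that point, while writing the file. The backslash keeps the literal $KUBELET_OPTS in the unit file.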
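The difference between the heredoc forms can be seen in a small experiment. This is a sketch that writes to a temporary directory (the file names are hypothetical) rather than to the real unit file.

```shell
demo_dir=$(mktemp -d)
KUBELET_OPTS=""   # empty in the shell that writes the file, as in the step above

# Unquoted delimiter: the shell expands $KUBELET_OPTS to nothing while writing.
cat > "$demo_dir/unquoted.service" << EOF
ExecStart=/usr/local/bin/kubelet $KUBELET_OPTS
EOF

# Escaped dollar sign: the literal text $KUBELET_OPTS reaches the file.
cat > "$demo_dir/escaped.service" << EOF
ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS
EOF

# Quoted delimiter: nothing is expanded, so no escaping is needed.
cat > "$demo_dir/quoted.service" << "EOF"
ExecStart=/usr/local/bin/kubelet $KUBELET_OPTS
EOF

# Compare the three results side by side.
grep -H ExecStart "$demo_dir"/*.service
```

Only the unquoted, unescaped variant loses the variable reference. Quoting the delimiter, as the other unit files in this guide do, avoids the escaping altogether.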

Node status

[root@master01 cert]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-DARl9unGiqaggF1Se3EXa9LjMgiGB-pMbFvgA4xHIxA 10m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

node-csr-YwyUtC-JMlbxToWTnonpvLVs3wQPhJZfkOhfoakBUDI 67s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

node-csr-zxgKbAQRZeVDtHvrlPh8HPFhD9wWzTr6d67V56e1dA0 84s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued

[root@master01 cert]# kubectl get node

NAME STATUS ROLES AGE VERSION

master01 NotReady 8m18s v1.21.10

master02 NotReady 108s v1.21.10

node01 NotReady 36s v1.21.10

Note: the nodes stay NotReady until the network plugin is deployed
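In the output above every request is already Approved,Issued. If a node does not appear in `kubectl get node`, its request may still be waiting for approval. A minimal sketch for listing such requests follows, assuming the CONDITION column is last as in the output above; the function name `pending_csrs` is hypothetical.

```shell
# Print the names of CSRs whose CONDITION column is exactly "Pending".
# Reads `kubectl get csr` output on stdin.
pending_csrs() {
  awk '$1 != "NAME" && $NF == "Pending" { print $1 }'
}

# Sample input; the first request name is made up.
pending_csrs << 'EOF'
NAME AGE SIGNERNAME REQUESTOR CONDITION
node-csr-example-pending 5s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending
node-csr-DARl9unGiqaggF1Se3EXa9LjMgiGB-pMbFvgA4xHIxA 10m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued
EOF
```

Each name printed could then be approved with `kubectl certificate approve <name>`.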

Deploy kube-proxy

Create the kube-proxy certificate signing request

[root@master01 cert]# cat > kube-proxy-csr.json << "EOF"

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

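# Generate the kube-proxy certificate

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# Copy the generated certificate files to /etc/kubernetes/ssl, where the kubeconfig commands read them

[root@master01 cert]# cp kube-proxy.pem kube-proxy-key.pem /etc/kubernetes/ssl/

Create the kubeconfig file

# Set the cluster information and the API server address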

[root@master01 cert]# kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.220.100:6535 --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

# Set the client certificate credentials

[root@master01 cert]# kubectl config set-credentials kube-proxy --client-certificate=/etc/kubernetes/ssl/kube-proxy.pem --client-key=/etc/kubernetes/ssl/kube-proxy-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

# Set the context for the cluster

[root@master01 cert]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

# Switch to that context

[root@master01 cert]# kubectl config use-context default --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

Create the service configuration file

After syncing this file to another node, change the IP addresses to that node's IP

[root@master01 cert]# cat > /etc/kubernetes/kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 192.168.220.20 # IP of the current node

clientConnection:

kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.244.0.0/16 # planned Kubernetes Pod network

healthzBindAddress: 192.168.220.20:10256 # IP address and port of the health check server

kind: KubeProxyConfiguration

metricsBindAddress: 192.168.220.20:10249 # IP address and port of the metrics server

mode: "ipvs" # proxy mode

EOF
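Because three fields in this file carry the node IP, the per-node change is easy to get partly wrong by hand. A minimal sketch of rendering a per-node copy follows; the function name `render_kube_proxy_config` is hypothetical, and the only assumption is that the template uses master01's IP as written above.

```shell
# Print a copy of the config with every occurrence of one IP replaced by another.
# $1 = template path, $2 = IP used in the template, $3 = IP of the target node
render_kube_proxy_config() {
  # Escape the dots so they match literally in the regular expression.
  kp_old=$(printf '%s' "$2" | sed 's/\./\\./g')
  # Require a non-digit (or end of line) after the IP so 192.168.220.20
  # does not also match the start of 192.168.220.200.
  sed -E "s/${kp_old}([^0-9]|\$)/$3\1/g" "$1"
}

# Demo on a throwaway template.
tpl=$(mktemp)
printf '%s\n' 'bindAddress: 192.168.220.20' \
  'healthzBindAddress: 192.168.220.20:10256' \
  'metricsBindAddress: 192.168.220.20:10249' > "$tpl"
render_kube_proxy_config "$tpl" 192.168.220.20 192.168.220.22
```

The output can be redirected to a file and copied to the node, or the function can be run on the node itself after the sync.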

Create the systemd unit file

[root@master01 ~]# cat > /usr/lib/systemd/system/kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/opt/data/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/opt/logs/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

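WantedBy=multi-user.target

EOF

# Create the log and working directories

[root@master01 ~]# mkdir -p /opt/logs/kubernetes

[root@master01 ~]# mkdir -p /opt/data/kube-proxy

Sync the configuration files to the other nodes and start the service

# Sync the configuration files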

[root@master01 ~]# for i in master02 node01

do

scp /etc/kubernetes/kube-proxy.* $i:/etc/kubernetes/

scp /etc/kubernetes/ssl/kube-proxy* $i:/etc/kubernetes/ssl/

scp /usr/lib/systemd/system/kube-proxy.service $i:/usr/lib/systemd/system

done

# Reload systemd and start the service

[root@master01 ~]# systemctl daemon-reload && systemctl enable --now kube-proxy

# Check the service status

[root@worker1 ~]# systemctl status kube-proxy.service

Network components

Deploy Calico from YAML

# Download the Calico manifest

[root@master01 packages]# wget https://docs.projectcalico.org/v3.19/manifests/calico.yaml --no-check-certificate

# Edit the manifest: set CALICO_IPV4POOL_CIDR to the planned Pod network

- name: CALICO_IPV4POOL_CIDR

  value: "10.244.0.0/16" # planned Pod IP range

# Apply the manifest

[root@master01 packages]# kubectl apply -f calico.yaml

# Verify the result

[root@master01 packages]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-7cc8dd57d9-9t6c5 1/1 Running 0 16m

kube-system calico-node-8gt8z 1/1 Running 0 55s

kube-system calico-node-9bf65 1/1 Running 0 65s

kube-system calico-node-trl6m 1/1 Running 0 75s

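Note: if the Calico deployment reports "BIRD is not ready: Error querying BIRD: unable to connect to BIRDv4", check the name of your network interface first, then edit the Calico manifest: search for CLUSTER_TYPE and add the following below it

- name: IP_AUTODETECTION_METHOD

  value: "interface=ens.*" # use the actual interface name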

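Deploy Flannel from binaries

Flannel gives containers a virtual network by assigning a subnet to every host. It is built on Linux TUN/TAP, encapsulates IP packets in UDP to create an overlay network, and uses etcd to keep track of subnet allocation.

# Download and install the flannel binaries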

[root@master01 packages]# wget https://github.com/coreos/flannel/releases/download/v0.12.0/flannel-v0.12.0-linux-amd64.tar.gz

# Unpack the flannel archive

[root@master01 others]# tar -xf flannel-v0.12.0-linux-amd64.tar.gz

[root@master01 others]# cp flanneld /usr/local/bin/

# Sync to the other nodes

[root@master01 others]# scp {flanneld,mk-docker-opts.sh} master02:~

[root@master02 ~]# cp flanneld /usr/local/bin/

Create the flanneld certificate and private key

# Create the certificate signing request

[root@master01 cert]# cat > flanneld-csr.json << EOF

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "NanJing",

"L": "NanJing",

"O": "k8s",

"OU": "system"

}

]

}

EOF

# Generate the certificate and private key

[root@master01 cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

# Distribute them to the other nodes

[root@master01 cert]# cp flanneld*.pem /etc/kubernetes/ssl/

[root@master01 cert]# scp flanneld*.pem master02:/etc/kubernetes/ssl/

# Write the cluster Pod network configuration to etcd

[root@master01 ~]# export FLANNEL_ETCD_PREFIX="/etc/kubernetes/network"

[root@master01 ~]# export ETCD_ENDPOINTS="https://192.168.220.30:2379,https://192.168.220.31:2379"

[root@master01 others]# ETCDCTL_API=2 etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/ssl/ca.pem \

--cert-file=/etc/kubernetes/ssl/flanneld.pem \

--key-file=/etc/kubernetes/ssl/flanneld-key.pem \

mk /etc/kubernetes/network/config '{"Network":"172.17.0.0/16", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}'

{"Network":"172.17.0.0/16", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}

Note: flanneld 0.12 cannot talk to the etcd v3 API. Before writing the Pod network configuration for flannel, add --enable-v2 to the etcd startup file to turn on the etcd v2 API.

Create the flanneld service unit file

[root@master01 others]# cat > /usr/lib/systemd/system/flanneld.service << EOF

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/usr/local/bin/flanneld \

-etcd-cafile=/etc/kubernetes/ssl/ca.pem \

-etcd-certfile=/etc/kubernetes/ssl/flanneld.pem \

-etcd-keyfile=/etc/kubernetes/ssl/flanneld-key.pem \

-etcd-endpoints=https://192.168.220.30:2379,https://192.168.220.31:2379 \

-etcd-prefix=/etc/kubernetes/network \

-ip-masq

ExecStartPost=/etc/kubernetes/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker

Restart=always

RestartSec=5

StartLimitInterval=0

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

EOF

# Start the flanneld service

[root@master02 ~]# systemctl daemon-reload && systemctl enable --now flanneld

# Check the service status

[root@master02 ~]# systemctl status flanneld.service

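- The mk-docker-opts.sh script writes the Pod subnet assigned to flanneld into the file given with -d, here /run/flannel/docker. Docker later uses the environment variables in this file to configure the docker0 bridge. The -k option sets the name of the combined variable in the generated file, which the Docker startup configuration refers to.

- flanneld talks to other nodes through the interface that holds the system default route. On nodes with several interfaces (for example, internal and public), use the -iface option to choose the interface.

- -ip-masq: flanneld sets SNAT rules for traffic that leaves the Pod network and sets the --ip-masq variable passed to Docker (in /run/flannel/docker) to false, so Docker no longer creates its own SNAT rules. With --ip-masq=true, Docker's SNAT rule is blunt: it applies SNAT to every request from local Pods that leaves through any interface other than docker0, so requests to Pods on other nodes carry the flannel.1 interface IP as their source and the destination Pod cannot see the real source Pod IP. The rules created by flanneld are gentler and apply SNAT only to requests for addresses outside the Pod network.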
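How Docker would pick up these variables can be sketched in plain shell. The file content below mirrors the /run/flannel/docker output shown in this guide. One assumption is that docker.service loads the file (for example through an EnvironmentFile line) and appends $DOCKER_NETWORK_OPTIONS to the dockerd command line. The unit file shown earlier in this guide does not do that yet.

```shell
# Recreate the generated file in a temporary location for the demo.
flannel_env=$(mktemp)
cat > "$flannel_env" << 'EOF'
DOCKER_OPT_BIP="--bip=172.17.66.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=false"
DOCKER_OPT_MTU="--mtu=1450"
DOCKER_NETWORK_OPTIONS=" --bip=172.17.66.1/24 --ip-masq=false --mtu=1450"
EOF

# Load the variables the way a shell-style environment file is read.
. "$flannel_env"

# Show the dockerd command line that would result.
echo "/usr/local/bin/dockerd$DOCKER_NETWORK_OPTIONS"
```

The combined variable carries the bridge IP, the disabled Docker masquerading, and the reduced MTU in one string, which is why the -k option names only that one variable.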

Check

# Check the Pod network configuration that flanneld reads

[root@master01 others]# ETCDCTL_API=2 etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/ssl/ca.pem \

--cert-file=/etc/kubernetes/ssl/flanneld.pem \

--key-file=/etc/kubernetes/ssl/flanneld-key.pem \

get /etc/kubernetes/network/config

# Output: {"Network":"172.17.0.0/16", "SubnetLen": 24, "Backend": {"Type": "vxlan"}}

# List the Pod subnets already allocated

[root@master01 ~]# ETCDCTL_API=2 etcdctl \

--endpoints=${ETCD_ENDPOINTS} \

--ca-file=/etc/kubernetes/ssl/ca.pem \

--cert-file=/etc/kubernetes/ssl/flanneld.pem \

--key-file=/etc/kubernetes/ssl/flanneld-key.pem \

ls /etc/kubernetes/network/subnets

/etc/kubernetes/network/subnets/172.17.54.0-24

/etc/kubernetes/network/subnets/172.17.66.0-24

# Check the flannel network interface on the node

[root@master01 network]# ifconfig flannel.1

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.66.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::186f:12ff:fe79:f54e prefixlen 64 scopeid 0x20<link>

ether 1a:6f:12:79:f5:4e txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 15 overruns 0 carrier 0 collisions 0

# Check the routing table

[root@master01 network]# route

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

default gateway 0.0.0.0 UG 100 0 0 ens32

172.17.0.0 0.0.0.0 255.255.0.0 U 0 0 0 docker0

172.17.54.0 172.17.54.0 255.255.255.0 UG 0 0 0 flannel.1

192.168.220.0 0.0.0.0 255.255.255.0 U 100 0 0 ens32

# Check the files generated by flannel

[root@master01 network]# cat /run/flannel/subnet.env

FLANNEL_NETWORK=172.17.0.0/16

FLANNEL_SUBNET=172.17.66.1/24

FLANNEL_MTU=1450

FLANNEL_IPMASQ=true

[root@master01 network]# cat /run/flannel/docker

DOCKER_OPT_BIP="--bip=172.17.66.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.66.1/24 --ip-masq=false --mtu=1450"

Deploy CoreDNS

# CoreDNS manifest

[root@master01 packages]# cat > coredns.yaml << "EOF"

apiVersion: v1

kind: ServiceAccount

metadata:

name: coredns

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: system:coredns

rules:

- apiGroups:

- ""

resources:

- endpoints

- services

- pods

- namespaces

verbs:

- list

- watch

- apiGroups:

- discovery.k8s.io

resources:

- endpointslices

verbs:

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

annotations:

rbac.authorization.kubernetes.io/autoupdate: "true"

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: system:coredns

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:coredns

subjects:

- kind: ServiceAccount

name: coredns

namespace: kube-system

---

apiVersion: v1

kind: ConfigMap

metadata:

name: coredns

namespace: kube-system

data:

Corefile: |

.:53 {

errors

health {

lameduck 5s

}

ready

kubernetes cluster.local in-addr.arpa ip6.arpa {

fallthrough in-addr.arpa ip6.arpa

}

prometheus :9153

forward . /etc/resolv.conf {

max_concurrent 1000

}

cache 30

loop

reload

loadbalance

}

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: coredns

namespace: kube-system

labels:

k8s-app: kube-dns

kubernetes.io/name: "CoreDNS"

spec:

# replicas: not specified here:

# 1. Default is 1.

# 2. Will be tuned in real time if DNS horizontal auto-scaling is turned on.

strategy:

type: RollingUpdate

rollingUpdate:

maxUnavailable: 1

selector:

matchLabels:

k8s-app: kube-dns

template:

metadata:

labels:

k8s-app: kube-dns

spec:

priorityClassName: system-cluster-critical

serviceAccountName: coredns

tolerations:

- key: "CriticalAddonsOnly"

operator: "Exists"

nodeSelector:

kubernetes.io/os: linux

affinity:

podAntiAffinity:

preferredDuringSchedulingIgnoredDuringExecution:

- weight: 100

podAffinityTerm:

labelSelector:

matchExpressions:

- key: k8s-app

operator: In

values: ["kube-dns"]

topologyKey: kubernetes.io/hostname

containers:

- name: coredns

image: coredns/coredns:1.8.4

imagePullPolicy: IfNotPresent

resources:

limits:

memory: 170Mi

requests:

cpu: 100m

memory: 70Mi

args: [ "-conf", "/etc/coredns/Corefile" ]

volumeMounts:

- name: config-volume

mountPath: /etc/coredns

readOnly: true

ports:

- containerPort: 53

name: dns

protocol: UDP

- containerPort: 53

name: dns-tcp

protocol: TCP

- containerPort: 9153

name: metrics

protocol: TCP

securityContext:

allowPrivilegeEscalation: false

capabilities:

add:

- NET_BIND_SERVICE

drop:

- all

readOnlyRootFilesystem: true

livenessProbe:

httpGet:

path: /health

port: 8080

scheme: HTTP

initialDelaySeconds: 60

timeoutSeconds: 5

successThreshold: 1

failureThreshold: 5

readinessProbe:

httpGet:

path: /ready

port: 8181

scheme: HTTP

dnsPolicy: Default

volumes:

- name: config-volume

configMap:

name: coredns

items:

- key: Corefile

path: Corefile

---

apiVersion: v1

kind: Service

metadata:

name: kube-dns

namespace: kube-system

annotations:

prometheus.io/port: "9153"

prometheus.io/scrape: "true"

labels:

k8s-app: kube-dns

kubernetes.io/cluster-service: "true"

kubernetes.io/name: "CoreDNS"

spec:

selector:

k8s-app: kube-dns

clusterIP: 10.96.0.2

ports:

- name: dns

port: 53

protocol: UDP

- name: dns-tcp

port: 53

protocol: TCP

- name: metrics

port: 9153

protocol: TCP

EOF

# Apply coredns.yaml

[root@master01 packages]# kubectl apply -f coredns.yaml

# Check the DNS Pod status

[root@master01 packages]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-7cc8dd57d9-9t6c5 1/1 Running 0 26m

kube-system calico-node-8gt8z 1/1 Running 0 11m

kube-system calico-node-9bf65 1/1 Running 0 11m

kube-system calico-node-trl6m 1/1 Running 0 11m

kube-system coredns-675db8b7cc-4lvmc 1/1 Running 0 43s

Deploy an application to verify the cluster

# Write the YAML file

[root@master01 packages]# cat > nginx.yaml << "EOF"

---

apiVersion: v1

kind: ReplicationController

metadata:

name: nginx-web

spec:

replicas: 2

selector:

name: nginx

template:

metadata:

labels:

name: nginx

spec:

containers:

- name: nginx

image: nginx:1.19.6

ports:

- containerPort: 80

---

apiVersion: v1

kind: Service

metadata:

name: nginx-service-nodeport

spec:

ports:

- port: 80

targetPort: 80

nodePort: 30001

protocol: TCP

type: NodePort

selector:

name: nginx

EOF

# Apply nginx.yaml

[root@master01 packages]# kubectl apply -f nginx.yaml

# Check the Pod status

[root@master01 packages]# kubectl get all

NAME READY STATUS RESTARTS AGE

pod/nginx-web-26dmn 1/1 Running 0 78s

pod/nginx-web-phnl5 1/1 Running 0 4m7s

pod/nginx-web-sclxd 1/1 Running 0 4m7s

NAME DESIRED CURRENT READY AGE

replicationcontroller/nginx-web 3 3 3 4m7s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 443/TCP 17h

service/nginx-service-nodeport NodePort 10.96.120.18 80:30001/TCP 4m7s

# Access the service through NodePort 30001 on any node

Set cluster roles

# Label k8s-master-1 with the master role

[root@k8s-master-2 ~]# kubectl label nodes k8s-master-1 node-role.kubernetes.io/master=

node/k8s-master-1 labeled

# Label k8s-node-1 with the node role

[root@k8s-master-2 ~]# kubectl label nodes k8s-node-1 node-role.kubernetes.io/node=

node/k8s-node-1 labeled

# Taint k8s-master-1 so that, as a master, it normally takes no workloads

[root@k8s-master-2 ~]# kubectl taint nodes k8s-master-1 node-role.kubernetes.io/master=true:NoSchedule

# Remove the taint so that k8s-master-1 can run Pods

[root@k8s-master-2 ~]# kubectl taint nodes k8s-master-1 node-role.kubernetes.io/master-

# Taint k8s-master-1 so that it does not run Pods

[root@k8s-master-2 ~]# kubectl taint nodes k8s-master-1 node-role.kubernetes.io/master=:NoSchedule

Deploy Ingress-nginx

Install Ingress-nginx with helm

# Download the helm binary

[root@master01 ~]# wget https://get.helm.sh/helm-v3.9.3-linux-amd64.tar.gz

[root@master01 ~]# tar -xf helm-v3.9.3-linux-amd64.tar.gz

[root@master01 linux-amd64]# cp helm /usr/local/bin/

# Add the ingress-nginx chart repository

[root@master01 ~]# helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

# List the repositories

[root@master01 ~]# helm repo list

NAME URL

ingress-nginx https://kubernetes.github.io/ingress-nginx

[root@master01 ~]# helm search repo ingress-nginx

NAME CHART VERSION APP VERSION DESCRIPTION

ingress-nginx/ingress-nginx 4.2.3 1.3.0 Ingress controller for Kubernetes using NGINX a...

# Download the Ingress-nginx chart; the syntax is helm pull <repository>/<chart>

[root@master01 ~]# helm pull ingress-nginx/ingress-nginx

[root@master01 ~]# tar -xf ingress-nginx-4.2.3.tgz

# 修改配置文件

[root@master01 ~]# vim ingress-nginx/values.yaml

image:

  repository: registry.cn-beijing.aliyuncs.com/dotbalo/controller

  # digest: sha256:46ba23c3fbaafd9e5bd01ea85b2f921d9f2217be082580edc22e6c704a83f02f # comment out or delete the image digest

dnsPolicy: ClusterFirstWithHostNet # change the DNS policy

hostNetwork: true # enable hostNetwork

kind: DaemonSet # deploy as a DaemonSet

nodeSelector: # add the ingress=true label

  kubernetes.io/os: linux

  ingress: "true"

type: ClusterIP # changed from LoadBalancer to ClusterIP

image:

  repository: registry.cn-beijing.aliyuncs.com/dotbalo/defaultbackend-amd64
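The settings listed above lose their nesting when shown as isolated fragments, so it may help to see them as one override file. The sketch below collects the same values into a separate file instead of editing `values.yaml` in place. The file name `ingress-values.yaml` is hypothetical, and the key paths (`controller.*`, `controller.service.type`, `defaultBackend.image`) are assumptions based on the layout of the 4.2.3 chart; `helm show values ingress-nginx/ingress-nginx --version 4.2.3` can confirm them.

```shell
# Hypothetical override file holding only the values changed in this guide.
# Key paths are assumed from the ingress-nginx 4.2.3 chart layout.
cat > ingress-values.yaml << 'EOF'
controller:
  image:
    repository: registry.cn-beijing.aliyuncs.com/dotbalo/controller
    digest: ""            # cleared, since the mirrored image has a different digest
  dnsPolicy: ClusterFirstWithHostNet
  hostNetwork: true
  kind: DaemonSet
  nodeSelector:
    kubernetes.io/os: linux
    ingress: "true"
  service:
    type: ClusterIP
defaultBackend:
  image:
    repository: registry.cn-beijing.aliyuncs.com/dotbalo/defaultbackend-amd64
EOF

# Print the file so the nesting can be checked before installing
cat ingress-values.yaml
```

Such a file could be passed at install time with `-f ingress-values.yaml`, which leaves the unpacked `values.yaml` untouched and keeps the changes easy to review.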

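# Install with Helm

# Label the nodes that should run ingress-nginx

[root@master01 ingress-nginx]# kubectl label node master02 ingress=true

# Run the install from the unpacked chart directory; --create-namespace creates the ingress-nginx namespace if it does not exist yet

[root@master01 ingress-nginx]# helm install ingress-nginx -n ingress-nginx --create-namespace .

# Check which node runs the ingress-nginx controller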

[root@master01 ingress-nginx]# kubectl get pod -n ingress-nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ingress-nginx-controller-792mp 1/1 Running 1 15h 192.168.220.31 master02 <none> <none>

Write a test case

# List the existing Services

[root@master01 ingress-nginx]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 16d

nginx-service-nodeport NodePort 10.96.81.131 <none> 80:30001/TCP 7d14h

# ingress.yaml

[root@master01 ingress-nginx]# vi ingress.yaml

apiVersion: networking.k8s.io/v1beta1

kind: Ingress

metadata:

  annotations:

    kubernetes.io/ingress.class: nginx # ingress class handled by ingress-nginx

  name: test-ingress

spec:

  rules:

  - host: ingress.test.com # custom domain

    http:

      paths:

      - backend:

          serviceName: nginx-service-nodeport # name of the backing Service listed above

          servicePort: 80 # Service port

        path: /

# Create the Ingress

[root@master01 ~]# kubectl apply -f ingress.yaml
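The `networking.k8s.io/v1beta1` Ingress API still works on the v1.21 cluster built here, but it was removed in Kubernetes v1.22. As a hedged sketch, the same rule could be written against `networking.k8s.io/v1` as shown below. The file name `ingress-v1.yaml` is hypothetical, the backend is assumed to be the `nginx-service-nodeport` Service on port 80 from the `kubectl get svc` output, and `ingressClassName: nginx` assumes the chart created its default IngressClass.

```shell
# Hypothetical v1 equivalent of the test Ingress.
cat > ingress-v1.yaml << 'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: test-ingress
spec:
  ingressClassName: nginx          # replaces the kubernetes.io/ingress.class annotation
  rules:
  - host: ingress.test.com
    http:
      paths:
      - path: /
        pathType: Prefix           # pathType is mandatory in v1
        backend:
          service:
            name: nginx-service-nodeport   # assumed backing Service
            port:
              number: 80
EOF

# Print the manifest for review before applying it
cat ingress-v1.yaml
```

The main differences from v1beta1 are the required `pathType` field and the nested `backend.service.name` / `backend.service.port.number` structure that replaces `serviceName` and `servicePort`.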

# Edit the hosts file; map the domain only to the node that runs the ingress-nginx controller

[root@master01 ~]# vim /etc/hosts

192.168.220.31 master02 ingress.test.com

# Access the site

[root@master01 ~]# curl ingress.test.com

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

</code></pre>

<h3>Deploy Harbor</h3>

<p>Download Harbor from the official releases page: https://github.com/goharbor/harbor/releases</p>

<h4>Deploy Harbor over HTTPS</h4>

<p><strong>Configure the hosts file</strong></p>

<pre><code class="prism language-powershell"><span class="token namespace">[root@localhost harbor]</span><span class="token comment"># vim /etc/hosts</span>

192<span class="token punctuation">.</span>168<span class="token punctuation">.</span>220<span class="token punctuation">.</span>32 harbor<span class="token punctuation">.</span>images<span class="token punctuation">.</span>io

</code></pre>

<p><strong>Generate the client certificates</strong></p>

<pre><code class="prism language-powershell"><span class="token comment"># Create the Harbor working directory and the certificate directory</span>

<span class="token namespace">[root@localhost data]</span><span class="token comment"># mkdir -p /opt/data/harbor/cert && cd /opt/data/harbor/cert</span>

<span class="token comment"># Generate the CA certificate private key</span>